What Is Predictive Analytics Software Development and How Does It Work?

In early 2025, a Houston energy company narrowly avoided $2.3 million in unplanned equipment downtime by deploying a predictive maintenance model that flagged a compressor failure 11 days before it occurred. The model was not sophisticated by current standards. It was trained on 18 months of sensor readings and maintenance logs that the company had been collecting for years without using. The data was already there. The capability to act on it was not.

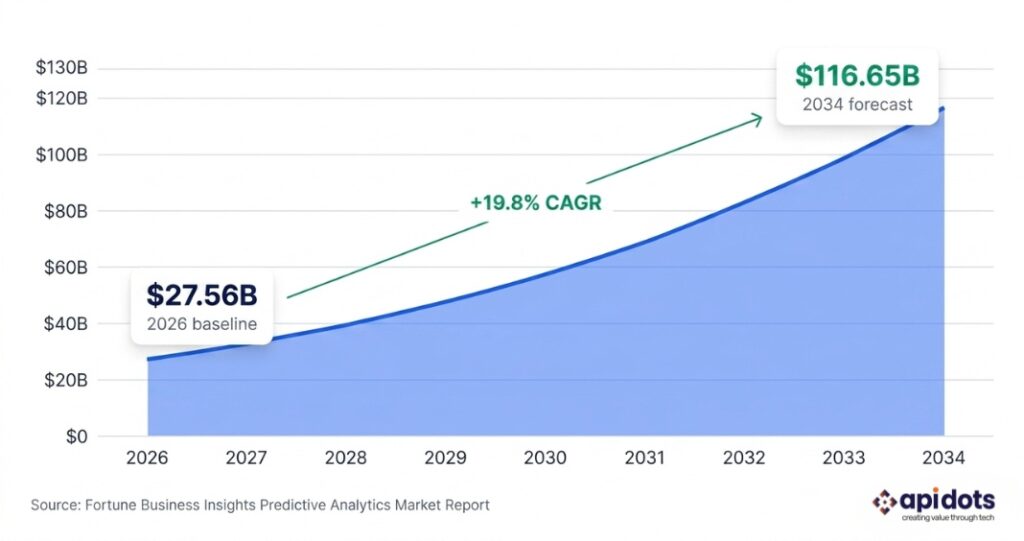

That gap between having data and acting on it predictively is one of the most expensive operational problems facing US businesses right now. The global predictive analytics market is forecast to grow from $27.56 billion in 2026 to $116.65 billion by 2034, per Fortune Business Insights. That trajectory is being driven not by large enterprises finally discovering AI, but by mid-market US companies in logistics, FinTech, healthcare, manufacturing, and retail realising that their competitors already run systems that look forward while they are still looking backwards.

This guide explains exactly what predictive analytics software development involves, how the development process works step by step, where it is being built across the US, what it costs, and how to evaluate a development partner who will actually deliver a production system rather than an impressive demo. Every section is written for decision-makers who need to act on this, not just understand it.

For the full technical context on the ML development lifecycle, read the Machine Learning Software Development guide which covers architecture, model selection, and deployment infrastructure in depth.

Predictive analytics is the practice of using historical data, statistical algorithms, and machine learning models to forecast future outcomes. Predictive analytics software development is the process of building a custom system that does this for your specific business context, integrates with your existing data infrastructure, and runs in production serving real decisions in real time.

That distinction matters more than most business leaders realise when they first start evaluating their options. There is a meaningful difference between three things that often get conflated in vendor conversations:

Three things that are NOT the same:

- Off-the-shelf analytics platforms (Tableau, Power BI): These are excellent tools for visualising existing data and building basic predictive models through a GUI. They are fast to deploy, require no engineering, and are appropriate when your use case is generic enough to fit within their capabilities. The limitation is precisely that: they are built for the average use case, not yours.
- AutoML and low-code ML tools: Platforms like DataRobot, Google AutoML, and Amazon SageMaker Autopilot automate model selection and training. They reduce engineering effort significantly and are appropriate for well-defined tabular prediction problems with clean data. They break down when you need custom feature engineering, non-standard model architectures, or deep integration with operational systems.
- Custom predictive analytics software development: A bespoke system built by a development team around your specific data, your specific prediction problem, and your specific production environment. This approach is appropriate when the off-the-shelf options cannot accommodate your data structure, your latency requirements, your regulatory constraints, or the complexity of the decision the model needs to support.

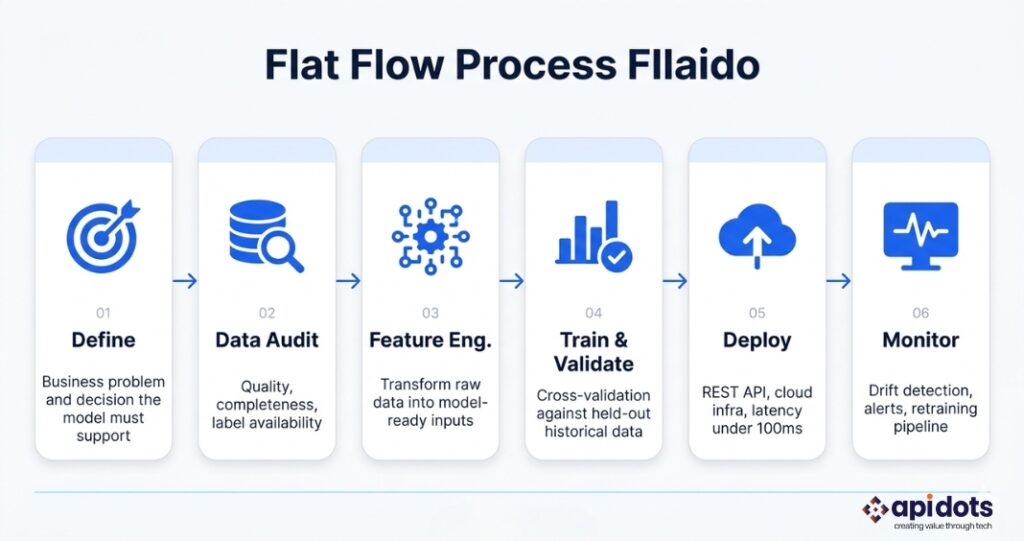

The full development lifecycle for custom predictive analytics software typically covers six distinct phases: data engineering and pipeline construction, model selection and training, validation and back-testing against historical outcomes, production deployment with appropriate cloud infrastructure, monitoring for model drift and performance degradation, and retraining as new data accumulates and the underlying patterns in the data evolve.

Understanding where your situation sits on this spectrum is the first decision the team at apidots.com makes with every client. Our AI and ML development service is designed specifically for US businesses that have moved past exploratory analytics and need a production-grade predictive system that runs reliably in their operational environment.

The development process is frequently misrepresented in vendor proposals as a linear sequence of tidy milestones. In practice, it is iterative, data-dependent, and full of decision points that cannot be resolved before the team has actually seen the data. Here is what an honest account of the process looks like, based on the approach the team at apidots.com uses with US clients across FinTech, healthcare, logistics, and manufacturing:

The most expensive mistake in predictive analytics development is building a technically correct model that answers the wrong question. Before any data is touched, the development team needs to understand precisely what decision the model’s output will inform, who will act on that prediction, and what the cost of a false positive versus a false negative is in this specific context. A demand forecasting model for a Chicago distribution company needs to optimise for different things than a fraud detection model for a New York FinTech firm, even if both involve classification at the surface level.
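To make the false-positive versus false-negative trade-off concrete, here is a minimal Python sketch of choosing a decision threshold by expected cost. It assumes the model outputs a probability-like score per case and that the business can put an approximate cost on each error type; the function names, scores, labels, and costs below are hypothetical placeholders, not figures from a real engagement.

```python
from typing import Sequence


def expected_cost(scores: Sequence[float], labels: Sequence[int],
                  threshold: float, cost_fp: float, cost_fn: float) -> float:
    """Total cost of flagging every case whose score is >= threshold."""
    total = 0.0
    for score, label in zip(scores, labels):
        flagged = score >= threshold
        if flagged and label == 0:
            total += cost_fp  # false positive: acted on something that did not happen
        elif not flagged and label == 1:
            total += cost_fn  # false negative: missed something that did happen
    return total


def best_threshold(scores: Sequence[float], labels: Sequence[int],
                   cost_fp: float, cost_fn: float) -> float:
    # Candidate thresholds: every observed score, plus infinity ("flag nothing").
    candidates = sorted(set(scores)) + [float("inf")]
    return min(candidates,
               key=lambda t: expected_cost(scores, labels, t, cost_fp, cost_fn))


# Hypothetical validation scores and observed outcomes (1 = event happened).
scores = [0.10, 0.40, 0.35, 0.80, 0.65, 0.20]
labels = [0, 0, 1, 1, 1, 0]

cheap_alarms = best_threshold(scores, labels, cost_fp=1, cost_fn=10)
costly_alarms = best_threshold(scores, labels, cost_fp=10, cost_fn=1)
print(cheap_alarms, costly_alarms)  # prints 0.35 0.65
```

With cheap false alarms and expensive misses, the cheapest threshold drops; reverse the costs and it rises. Same model, two different operating points, which is why the decision context has to be pinned down before training.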

At a Dallas retail company that came to apidots.com for a demand forecasting system, the discovery sprint revealed that 40% of historical sales records were missing geographic tags due to a legacy POS system migration three years prior. Building the model on that data without addressing the gap would have produced forecasts that systematically underestimated demand in suburban locations. Data audits are not optional or cursory; they are the most important work done before any model training begins. The audit covers completeness, label availability, class balance, time series continuity, and whether the training data actually reflects the distribution of data the model will encounter in production.
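Several of these audit checks can be partly automated. The sketch below is a minimal example in plain Python under stated assumptions: records arrive as dictionaries with a hypothetical date field, a few input fields, and a label field, and the function reports per-field missing rates, label coverage, class balance, and calendar days with no records. It does not attempt the training-versus-production distribution comparison, which needs production data.

```python
from collections import Counter
from datetime import date, timedelta


def audit(records, fields, label_field, date_field):
    """Summarise completeness, label coverage, class balance and date gaps."""
    if not records:
        raise ValueError("no records to audit")
    n = len(records)
    # Completeness: share of records where each field is None or an empty string.
    missing_rate = {f: sum(r.get(f) in (None, "") for r in records) / n for f in fields}
    # Label availability and class balance (over labelled records only).
    labels = [r[label_field] for r in records if r.get(label_field) is not None]
    class_balance = {k: v / len(labels) for k, v in Counter(labels).items()} if labels else {}
    # Time-series continuity: calendar days with no records between first and last date.
    days = {r[date_field] for r in records if r.get(date_field) is not None}
    missing_days = []
    if days:
        day, last = min(days), max(days)
        while day <= last:
            if day not in days:
                missing_days.append(day)
            day += timedelta(days=1)
    return {"rows": n, "missing_rate": missing_rate,
            "label_coverage": len(labels) / n,
            "class_balance": class_balance, "missing_days": missing_days}


# Hypothetical records; "region" mimics a geographic tag lost in a system migration.
records = [
    {"date": date(2024, 1, 1), "region": "TX-N", "units": 12, "label": 0},
    {"date": date(2024, 1, 2), "region": None, "units": 9, "label": 1},
    {"date": date(2024, 1, 4), "region": "", "units": 15, "label": 0},
    {"date": date(2024, 1, 5), "region": "TX-S", "units": 7, "label": None},
]
report = audit(records, fields=["region", "units"], label_field="label", date_field="date")
print(report["missing_rate"], report["missing_days"])
```

Here half the region tags are missing, one record has no label, and 3 January has no data at all: three separate problems that would each distort a model trained on this table.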

Feature engineering is the process of transforming raw data into the numerical representations a model can learn from. It is where domain knowledge intersects with statistical thinking, and it is where most of the difference between a good model and a great one is created. Model selection follows from the problem definition and data characteristics: gradient boosting models perform reliably on tabular data and can be explained with feature-attribution methods such as SHAP, which makes them a strong choice for regulated industries in New York and Boston where explainability is a compliance requirement. Deep learning architectures are appropriate for unstructured data problems in computer vision or NLP, but they require substantially more training data and infrastructure to deploy reliably.
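One common feature-engineering pattern is a rolling-window rate computed only from events that occurred before the prediction date. The sketch below assumes events are (day number, outcome) pairs for one entity, and the 30-day window and `rolling_rate` name are illustrative choices. The point it illustrates is avoiding look-ahead leakage, where a feature accidentally includes information from the day being predicted or later.

```python
def rolling_rate(events, day, window_days=30):
    """Success rate over the `window_days` days strictly before `day`.

    `events` is a list of (day_number, succeeded) pairs. Only events with
    day - window_days <= event_day < day are used, so nothing from the
    prediction day or later can leak into the feature.
    """
    past = [ok for event_day, ok in events if day - window_days <= event_day < day]
    return sum(past) / len(past) if past else None  # None = no history yet


# Hypothetical on-time outcomes for one carrier on one route, keyed by day number.
events = [(1, True), (2, False), (3, True), (10, True), (35, False)]

features = {d: rolling_rate(events, d) for d in (1, 4, 36)}
print(features)
```

Computing the same feature on day 35 ignores the failure recorded on day 35 itself; including it would make offline metrics look better than anything the model can achieve in production.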

Training a model is computationally intensive but conceptually straightforward. The more important work is validation: testing the model against data it has never seen, using time-ordered cross-validation (for example, walk-forward splits in which every test period falls after its training period) so that evaluation mirrors how the model will be used in production. Back-testing against historical outcomes that were not included in training data is essential for any predictive analytics system used in a business context. A model that looks excellent on training data but has not been rigorously tested against held-out historical periods is not production-ready regardless of its metric scores.
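The sketch below shows one way to do time-ordered back-testing: an expanding-window, walk-forward split in which every test window lies strictly after its training data. It is written in plain Python to stay self-contained; scikit-learn's `TimeSeriesSplit` implements a similar scheme. The naive last-value forecaster is a hypothetical baseline included only so the loop has something to score, and the monthly series is synthetic.

```python
def walk_forward_splits(n_periods, initial_train, horizon, step=None):
    """Yield (train_idx, test_idx) pairs over time-ordered periods.

    Training always covers periods 0..start-1 and testing the next `horizon`
    periods, so no fold ever trains on data from after its test window.
    """
    step = step or horizon
    start = initial_train
    while start + horizon <= n_periods:
        yield list(range(start)), list(range(start, start + horizon))
        start += step


def naive_last_value(history, horizon):
    # Hypothetical baseline: repeat the last observed value.
    return [history[-1]] * horizon


def backtest(series, initial_train, horizon, forecast):
    """Mean absolute error of `forecast` on each walk-forward test window."""
    errors = []
    for train_idx, test_idx in walk_forward_splits(len(series), initial_train, horizon):
        history = [series[i] for i in train_idx]
        actual = [series[i] for i in test_idx]
        predicted = forecast(history, len(test_idx))
        errors.append(sum(abs(p - a) for p, a in zip(predicted, actual)) / len(actual))
    return errors


# Synthetic 18-month series: train on the first 12 months, test 3 months at a time.
monthly = [100 + 5 * m for m in range(18)]
print(backtest(monthly, initial_train=12, horizon=3, forecast=naive_last_value))  # prints [10.0, 10.0]
```

A candidate model is only worth deploying if it beats a baseline like this one on every held-out window, not just on a random shuffle of the data.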

Production deployment means building the cloud infrastructure that serves model predictions to real users or systems in real time. This includes API design, latency requirements under load, containerisation with Docker and orchestration with Kubernetes, and integration with the client’s existing operational systems. The cloud services architecture decisions made here directly determine whether the system can handle the traffic volumes and uptime requirements of a production US enterprise environment. A model that serves predictions in 400 milliseconds under test conditions but degrades to 4 seconds under concurrent production load is not fit for purpose.
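A rough way to check the "fast in testing, slow under load" failure mode is to time the same prediction call at different concurrency levels and compare tail latency. The sketch below assumes an in-process `predict` function; the `stub_predict` stand-in, which serialises requests behind a lock to mimic a single-worker model server, is hypothetical. Load-testing a deployed HTTP endpoint would normally use a dedicated tool such as Locust or k6 rather than this loop.

```python
import statistics
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def measure_latency(predict, payloads, concurrency):
    """Call `predict` on every payload from `concurrency` threads; return
    p50, p95 and max latency in milliseconds."""
    def timed(payload):
        start = time.perf_counter()
        predict(payload)
        return (time.perf_counter() - start) * 1000.0

    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(timed, payloads))
    cuts = statistics.quantiles(latencies, n=100)  # 99 percentile cut points
    return {"p50": cuts[49], "p95": cuts[94], "max": max(latencies)}


_model_lock = threading.Lock()


def stub_predict(payload):
    # Hypothetical stand-in for a model server that can only score one request
    # at a time: concurrent callers queue behind the lock.
    with _model_lock:
        time.sleep(0.002)
    return 0.5


payloads = [{"id": i} for i in range(40)]
single = measure_latency(stub_predict, payloads, concurrency=1)
loaded = measure_latency(stub_predict, payloads, concurrency=8)
print(f"p95 alone: {single['p95']:.1f} ms, p95 with 8 callers: {loaded['p95']:.1f} ms")
```

Reporting p95 rather than the average matters here: queueing shows up first in the tail, which is exactly what users of a real-time system experience.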

Model drift occurs when the statistical properties of the data the model encounters in production diverge from the data it was trained on. This is not a failure state — it is an expected feature of any model operating in a real-world environment where customer behaviour, market conditions, and operational patterns change over time. An energy company in Houston that trains a predictive maintenance model during summer operating conditions will encounter different sensor distributions in winter. A New York credit risk model trained before a Federal Reserve rate cycle will behave differently in the rate environment that follows. Every production predictive analytics system needs automated drift monitoring, defined performance thresholds that trigger alerts, and a documented retraining process. Without these, the model degrades silently and the business decision-makers using its outputs lose trust in the system before anyone has diagnosed the actual problem.
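Automated drift monitoring often starts with a per-feature distribution comparison. The sketch below computes the Population Stability Index (PSI) between a training-time sample and a production sample, using equal-width bins taken from the training data (quantile bins are a common alternative). The 0.1 and 0.25 thresholds are a widely used rule of thumb, not a standard, and should be tuned per feature; the summer and winter sensor readings are synthetic.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index of `actual` (production) against `expected`
    (training), using equal-width bins spanning the training range."""
    lo, hi = min(expected), max(expected)
    edges = [lo + (hi - lo) * i / bins for i in range(1, bins)]  # interior edges

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Bin index = number of edges below v; out-of-range values fall in the end bins.
            counts[sum(v > e for e in edges)] += 1
        # Floor empty bins so the log term stays finite.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(score, warn=0.1, alert=0.25):
    # Rule-of-thumb thresholds; tune them per feature in practice.
    if score >= alert:
        return "alert"
    if score >= warn:
        return "warn"
    return "ok"


# Synthetic sensor readings: training (summer) vs two production samples.
summer = [20 + (i % 10) for i in range(1000)]
more_summer = [20 + (i % 10) for i in range(500)]
winter = [15 + (i % 10) for i in range(500)]
print(drift_status(psi(summer, more_summer)), drift_status(psi(summer, winter)))  # prints: ok alert
```

In production this check would run on a schedule for each monitored feature (and on the model's output scores), with an "alert" result feeding the documented retraining process.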

For a detailed breakdown of how MLOps infrastructure connects to the full ML lifecycle, the Machine Learning Software Development guide covers production architecture, monitoring frameworks, and retraining pipeline design in technical detail.

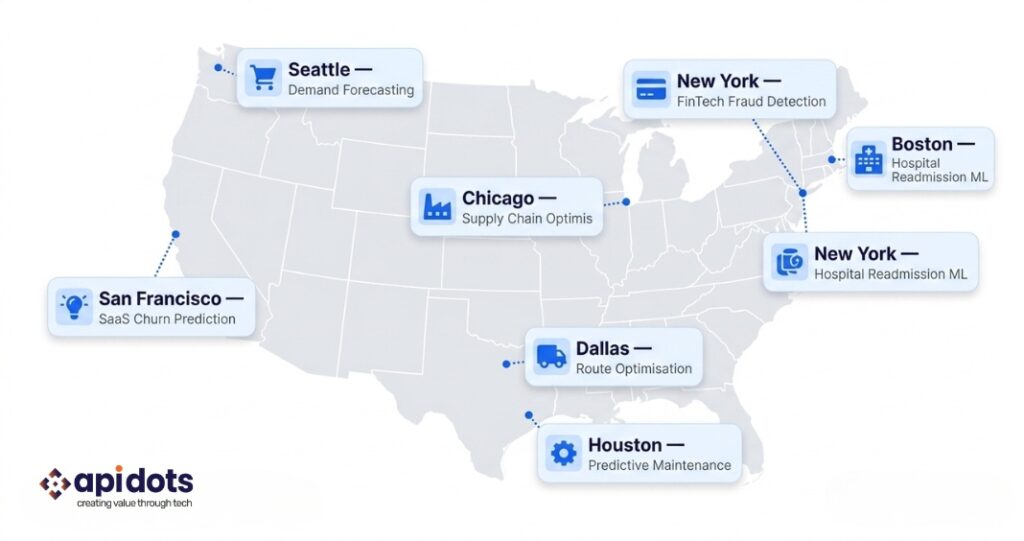

The US market for custom predictive analytics software is geographically distributed in ways that reflect industry concentration rather than general technology adoption. Understanding where the demand is strongest and what specific problem each market is solving provides useful context for any US business evaluating where their project sits in the competitive landscape.

New York is the most active market for FinTech-specific predictive analytics, driven by Wall Street’s decades-long investment in quantitative methods and the concentration of regulated financial services firms across Manhattan and Jersey City. Fraud detection, credit risk modelling, algorithmic trading signal generation, and loan default prediction are the dominant use cases. The regulatory environment in New York is among the most demanding in the country for ML systems used in financial decision-making: FINRA oversight for broker-dealers, consumer privacy obligations for consumer-facing models (including the CCPA where California residents’ data is involved), and internal model risk management frameworks all impose requirements that a predictive analytics development partner must build from day one. Our FinTech application development services address these requirements as standard.

California, specifically the San Francisco Bay Area and Los Angeles, is where enterprise SaaS companies and AdTech firms are deploying predictive analytics at scale for customer lifetime value modelling, churn prediction, and programmatic advertising optimization. San Francisco’s biotech cluster, particularly around Mission Bay and South San Francisco, is also a significant market for drug discovery prediction and clinical trial outcome modelling. The data volumes in these contexts are large, the model architectures are complex, and the cloud infrastructure requirements are demanding.

Texas is the most diverse predictive analytics market in the country by industry sector. Houston’s energy sector is deploying predictive maintenance models across upstream oil and gas equipment, pipeline integrity monitoring, and grid management for renewable energy assets. Austin’s growing tech startup ecosystem is commissioning churn prediction and revenue forecasting models at a rate that has made it one of the fastest-growing ML development markets in the country. Dallas logistics and supply chain companies represent a third distinct use case cluster: route optimisation, carrier performance prediction, and demand forecasting for retail distribution networks.

Chicago’s manufacturing and supply chain concentration makes it the strongest Midwest market for ML-powered quality control, predictive equipment maintenance, and commodities trading analytics. Our work with Chicago-area finance and manufacturing clients reflects the specific requirements of those sectors: interpretable models, integration with legacy operational systems, and deployment architectures that satisfy internal IT governance frameworks.

Boston is the leading US market for HealthTech predictive analytics, driven by the concentration of academic medical centres, life sciences companies, and digital health startups in the Cambridge and Longwood Medical Area corridor. Hospital readmission prediction, clinical decision support, and patient deterioration early warning systems are the dominant use cases. These systems require HIPAA-compliant data architecture, integration with Epic and Cerner EHR systems, and model interpretability that satisfies clinical staff and hospital ethics committees simultaneously.

Florida’s insurance sector, centred on Tampa and Miami, is a significant market for actuarial risk modelling and real estate demand forecasting. Seattle remains the country’s leading market for e-commerce demand forecasting and cloud-native ML deployments, anchored by Amazon’s internal ML platform investments and the ecosystem of e-commerce businesses that have grown around it. The team at apidots.com serves clients across all of these markets from a single production-capable team with sector experience in each.

The client was a Chicago-based B2B logistics company operating across the Midwest, serving retail and manufacturing clients and processing approximately 14,000 deliveries per month across Illinois, Indiana, Ohio, and Michigan. The business had 180 employees and had been operating with a rule-based routing system for six years. The system had worked adequately when their delivery volume was under 5,000 per month, but at scale it was generating a 22% on-time delivery failure rate that was costing the business approximately $340,000 per month in penalty clauses under SLA contracts with their largest retail clients.

The rule-based system made routing decisions based on fixed parameters: distance, vehicle capacity, and a static carrier preference ranking updated quarterly. It did not account for weather patterns, road event data, carrier performance variability by route or day of week, or the demand clustering that characterised their retail clients’ ordering behaviour. The operations team knew the system was underperforming but had no way to act on that knowledge without rebuilding the routing logic from scratch, which their internal IT team did not have the capacity to do.

The engagement began with a two-week discovery sprint that audited 18 months of delivery records, carrier performance logs, weather data, and road event feeds. The audit revealed that 73% of delivery failures were concentrated in four carrier-route combinations that the existing rule-based system was continuing to use because the quarterly ranking update had not captured their recent performance degradation. This finding alone was actionable before any model was trained. API DOTS built a gradient boosting model trained on the full 18-month dataset, incorporating engineered features for weather impact by route corridor, carrier performance trends with a 30-day rolling window, and demand pattern clustering by client and day of week. The model was deployed as a REST API integrated with the client’s existing transport management system on AWS infrastructure, with prediction latency under 85 milliseconds and real-time monitoring via a cloud-native observability stack.
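The carrier-route finding is an example of analysis that pays off before any model is trained. Below is a minimal sketch of that kind of concentration check, assuming hypothetical delivery records with `carrier`, `route`, and `on_time` fields; the function name and sample data are invented for illustration.

```python
from collections import Counter


def failure_concentration(deliveries, top_k):
    """Share of failed deliveries that fall in the `top_k` worst carrier-route pairs."""
    failures = Counter((d["carrier"], d["route"]) for d in deliveries if not d["on_time"])
    total = sum(failures.values())
    if total == 0:
        return 0.0, []
    worst = failures.most_common(top_k)
    return sum(count for _, count in worst) / total, [pair for pair, _ in worst]


# Invented sample: most failures sit on a single carrier-route pair.
deliveries = (
    [{"carrier": "A", "route": "CHI-IND", "on_time": False}] * 3
    + [{"carrier": "B", "route": "CHI-DET", "on_time": False}]
    + [{"carrier": "B", "route": "CHI-DET", "on_time": True}] * 5
    + [{"carrier": "C", "route": "CHI-CLE", "on_time": True}] * 6
)
share, worst_pairs = failure_concentration(deliveries, top_k=1)
print(f"{share:.0%} of failures come from {worst_pairs}")
```

On real data, `top_k` would be raised until the share stops climbing quickly; a high share from a handful of pairs is a signal that the static ranking, not the overall network, is the problem.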

On-time delivery rate improved from 78% to 93% in 11 weeks of production operation. Monthly penalty exposure dropped from $340,000 to $87,000, a reduction of $253,000 per month. In the second phase of the engagement, the model was extended to predict carrier capacity shortfalls 72 hours in advance, allowing the operations team to pre-book alternative capacity before the failure occurred rather than after. That capability was not in the original brief — it emerged from the monitoring data that showed where the first model’s uncertainty estimates were highest.

| “The problem was not the data. The data was there. The problem was that nobody had built a system to turn 18 months of delivery history into a forward-looking decision. That is what predictive analytics software development actually delivers.” |

For a related example in inventory and supply chain, read: Smart AI Inventory Management for Growing Businesses.

| Building a predictive analytics system for your US business? The team at apidots.com offers a free discovery call to assess your data, define your use case, and map a realistic delivery timeline. No pitch. Just honest answers. Book a Free Discovery Call → |

The generic benefits list — ‘better decisions, cost savings, competitive advantage’ — is not useful for a US CTO trying to build an internal business case. Here are the actual outcomes that predictive analytics software development delivers, tied to specific US market contexts and real numbers.

The most direct value of predictive analytics is the elimination of reactive fire-fighting. A manufacturing plant in Detroit that repairs equipment after failure spends an average of 3 to 5 times more per maintenance event than a plant that identifies and addresses degradation before failure occurs. McKinsey research shows that AI-driven inventory and supply chain management reduces costs by 20 to 30% for organisations that deploy predictive models at an operational level rather than just an analytical one. The distinction is important: descriptive analytics tells you what happened; predictive analytics tells you what is likely to happen, early enough to act before it does.

New York FinTech firms operating in consumer lending and payment processing have reduced fraud losses by 25 to 40% using transaction-level predictive models that flag anomalous patterns in real time. The same pattern holds in Chicago’s commodities trading firms and Miami’s insurance underwriting desks. The business case is straightforward: every dollar of fraud prevented is a dollar of pure margin recovery, and the models that prevent it become more accurate as they are retrained on accumulating production data. A rule-based fraud system is static. A predictive model that is retrained on new outcomes gets better.

SaaS businesses in San Francisco and Austin with annual recurring revenue above $5 million see churn prediction ROI that grows directly with revenue scale. A model that identifies at-risk accounts 30 days before cancellation with 74% precision gives a customer success team enough lead time to intervene meaningfully. The revenue impact depends entirely on the intervention rate and the average contract value, but for a SaaS business with $10 million ARR and 8% annual churn, even a 20% reduction in churn attributable to predictive intervention represents $160,000 in annual recurring revenue retained.
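The churn arithmetic above can be written out explicitly. This is a minimal sketch assuming the 20% is a relative reduction of baseline churn (8% falling to 6.4%, not 20 percentage points) and ignoring expansion revenue and multi-year effects; `retained_arr` is an illustrative name.

```python
def retained_arr(arr, annual_churn_rate, relative_churn_reduction):
    """Annual recurring revenue kept when churn falls by a relative fraction."""
    churned_per_year = arr * annual_churn_rate          # revenue lost to churn today
    return churned_per_year * relative_churn_reduction  # portion of that loss avoided


saved = retained_arr(10_000_000, 0.08, 0.20)
print(f"${saved:,.0f}")  # prints $160,000
```

Because the result is proportional to ARR, doubling revenue at the same churn rate doubles the retained amount, which is why the case gets stronger as the business scales.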

Finance teams at mid-market US companies that replace spreadsheet-based revenue forecasting with machine learning models consistently reduce forecast variance by 20 to 30%, according to CFO survey data cited in Gartner’s analytics research. The operational benefit is real: tighter forecasts mean more confident hiring decisions, more disciplined procurement, and more credible investor reporting. For pre-IPO companies in New York and California, forecast accuracy is a material factor in valuation conversations.

Ohio and Michigan manufacturing firms using predictive quality control models report defect rate reductions of 15 to 25% within six months of production deployment. The model identifies the process parameters most predictive of defect occurrence and flags batches for inspection before they leave the line rather than after. For a contract manufacturer with $50 million in annual revenue, a 20% defect rate reduction translates to between $800,000 and $1.5 million in annual rework and warranty cost elimination, assuming baseline rework and warranty costs of roughly $4 million to $7.5 million a year (8 to 15% of revenue) that fall in proportion to defects. The AI and ML development capabilities at apidots.com cover all of these use cases with production-grade delivery across US locations.

The US market for ML development vendors ranges from boutique one-person consultancies to large professional services firms that subcontract the actual ML work to offshore teams. Identifying the difference requires asking specific questions, not reviewing polished portfolio pages. Here is the framework the team at apidots.com recommends to any US business evaluating vendors.

Any vendor that responds to a predictive analytics brief with a fixed price and timeline without first seeing your data is either incompetent or indifferent to the outcome. The right ML architecture for your problem depends entirely on what your data actually contains: its completeness, its temporal structure, the quality of its labels, and whether it reflects the distribution of inputs the model will encounter in production. A proposal written before the data audit is a guess dressed as expertise.

Ask to see a specific production system in operation. Not a portfolio page, not a demo environment, and not a Jupyter notebook with impressive metric scores. A URL, a real user base, and evidence of how the monitoring and retraining infrastructure works. Most US ML vendors cannot show you this because they have not built it. apidots.com can. Our full services overview reflects a track record of production deployments rather than prototypes.

HealthTech predictive analytics in Massachusetts and California must be HIPAA-compliant from the first line of architecture. FinTech models used in credit decisions in New York must satisfy model risk management expectations such as the Federal Reserve and OCC supervisory guidance known as SR 11-7, plus FINRA requirements where broker-dealers are involved. Consumer-facing predictive models in California must account for CCPA data rights, including emerging rules on opting out of certain automated decision-making. A vendor who treats compliance as an afterthought or bills it as a separate engagement after the model is built is not a responsible partner for a US business operating in a regulated sector.

Any proposal that describes the engagement ending at deployment is describing a liability, not a solution. Every production predictive model needs monitoring infrastructure, defined drift thresholds, and a retraining process. If these are not in the initial scope, they will appear as an unplanned engagement six to nine months after launch when the model’s performance has degraded. How to choose the right software development company covers this evaluation framework across the broader software development context.

API DOTS is a production ML and predictive analytics development firm. The distinction matters: we do not produce proofs of concept, build notebook demonstrations, or deliver model files without the deployment and monitoring infrastructure to run them. Every engagement results in a working system in production, owned by the client, with full documentation and operational runbooks.

Every apidots.com engagement follows the same structure regardless of US location or industry sector. A data audit sprint runs before any model architecture is proposed. The audit determines whether the ML approach is justified by the data — and if it is not, we say so rather than taking the engagement. We have turned down projects in New York, Texas, and California where the data quality made the proposed ML approach unworkable. That honesty is not common in the US ML vendor market, but it is the only approach that produces reliable production outcomes.

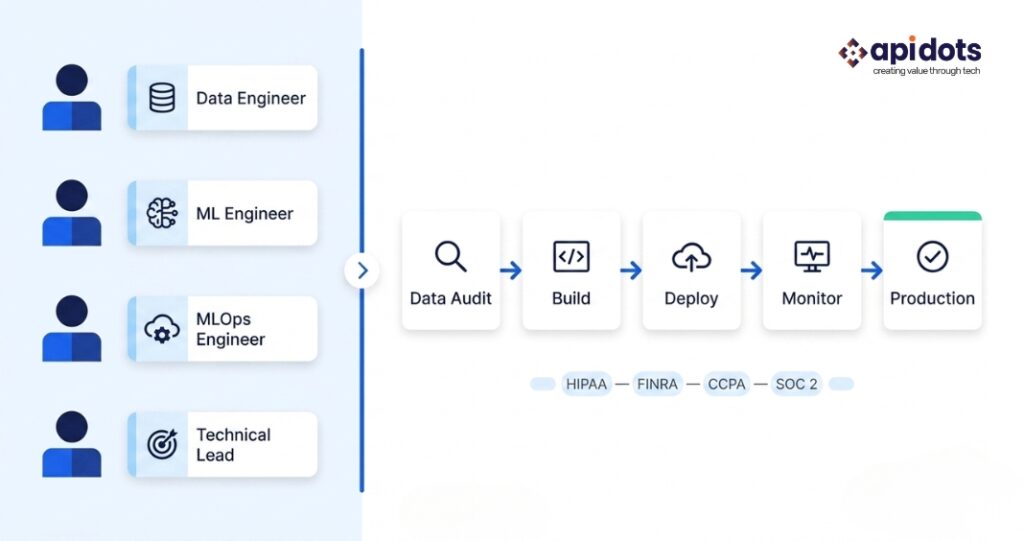

The team structure is consistent across projects: a data engineer responsible for pipeline construction and data quality remediation, an ML engineer responsible for model architecture and training, an MLOps engineer responsible for deployment infrastructure and monitoring, and a technical lead who owns the client relationship and the project’s business outcome definition. US businesses get a full team from day one, not a single contractor who builds in isolation.

Compliance-aware development is standard for all US regulated industry clients. HIPAA data handling architecture for healthcare clients, FINRA-aligned model documentation for financial services, and CCPA compliance for consumer-facing models in California are built into the delivery process, not added as afterthoughts. Our healthcare software development and FinTech development capabilities reflect this sector-specific depth.

Post-deployment monitoring and retraining are included in every standard engagement, not sold as a support tier. The production system we deliver includes automated drift monitoring, performance threshold alerts, a documented retraining process, and a defined cadence for model review. Every engagement also delivers model cards, data lineage documentation, API specifications, and operational runbooks. Whether you are in Austin, Atlanta, or San Jose, the delivery model is the same. Explore the full AI and ML development service or read the complete Machine Learning Software Development guide to understand how apidots.com approaches projects from data strategy through production.

| Ready to build predictive analytics software for your US business? Whether you are in New York, Chicago, Houston, Los Angeles, or Seattle, the team at apidots.com delivers production predictive analytics systems built around your data and your business outcomes. No demos. No notebooks. Production systems only. Contact API DOTS for a Free Consultation → |

What is the difference between predictive analytics software and a standard analytics dashboard?

A standard analytics dashboard reports on what has already happened: revenue last quarter, customer churn last month, defect rates last week. It is a rear-view mirror. Predictive analytics software builds a statistical model that uses historical patterns to forecast what will happen next: which customers are likely to churn in the next 30 days, which equipment is likely to fail in the next two weeks, which orders are most likely to be delayed. The output of a predictive analytics system is an actionable forecast or probability score, not a historical summary. The infrastructure difference is also significant: dashboards can be built on standard BI tooling, while predictive analytics software requires a trained ML model, a feature engineering pipeline, production deployment infrastructure, and ongoing monitoring. The two are not interchangeable, and confusing them is one of the most common reasons US businesses underestimate what a predictive analytics project actually involves.

How much does custom predictive analytics software development cost for a US business?

Custom predictive analytics software development for US businesses typically ranges from $40,000 to $120,000 for a focused single-use-case production system, delivered over 8 to 16 weeks. More complex systems involving multiple prediction models, custom data pipelines, regulatory compliance requirements, or integrations with multiple enterprise systems can range from $120,000 to $350,000 or more depending on scope. These figures reflect fully-loaded project delivery including data engineering, model development, deployment infrastructure, monitoring, and documentation. For context, the fully-loaded annual cost of a single senior ML engineer in San Francisco exceeds $300,000. For most US SMEs with a defined project scope, the development partnership model delivers a complete production system at a lower total cost than employing one senior ML engineer for a year. The AI software development cost guide provides a detailed breakdown of cost drivers across different project types.

How long does it take to build a predictive analytics system from scratch?

A focused MVP predictive analytics system — one use case, clean or cleanable data, a single model, REST API deployment, and basic monitoring — typically takes 8 to 12 weeks from kick-off to production deployment with the team at apidots.com. More complex systems that require significant data engineering work, custom feature stores, multi-model architectures, or deep enterprise system integration take 16 to 24 weeks. The single biggest variable in timeline is data readiness: businesses that arrive with clean, labelled, well-structured historical data deploy significantly faster than those whose first phase involves substantial data engineering and quality remediation. The discovery sprint that apidots.com runs at the start of every engagement is specifically designed to surface data readiness issues before the project timeline is committed.

What data do I need to start a predictive analytics software development project?

The minimum viable dataset for a predictive analytics project depends on the specific prediction problem, but a useful rule of thumb is 12 to 24 months of historical records that include both the input features your model will use and the outcomes you want it to predict. For a customer churn model, that means CRM activity data, product usage logs, and a record of which customers actually churned and when. For a predictive maintenance model, that means sensor readings and a record of actual equipment failures. For a demand forecasting model, that means sales history, ideally with external variables like seasonal trends and promotions. Data quality matters more than quantity: a complete, well-structured 12-month dataset is more useful than a messy, partially labelled 36-month one. The data audit that begins every apidots.com engagement is specifically designed to assess what you have and what remediation is needed before model training begins.

Which US industries benefit most from custom predictive analytics software development?

The industries with the strongest documented ROI on custom predictive analytics in the US are financial services, healthcare and life sciences, logistics and supply chain, manufacturing, and retail and e-commerce. Financial services firms in New York benefit from fraud detection, credit risk, and portfolio optimisation models. Healthcare organisations in Boston and Chicago benefit from readmission prediction, clinical decision support, and patient deterioration early warning. Logistics firms across the Midwest benefit from route optimisation and carrier performance prediction. Manufacturers in Michigan and Ohio benefit from predictive quality control and equipment maintenance models. Retailers and e-commerce businesses in California and Washington benefit from demand forecasting and customer lifetime value models. The common thread across all of these is that each sector has large volumes of historical operational data and specific decisions where a reliable forward-looking forecast has measurable commercial value. The offshore software development and ML delivery guide covers how US businesses can access production ML capability efficiently regardless of their internal engineering capacity.

We engineer and integrate custom ML software solutions end-to-end, delivering predictive models, data insights, and measurable business results.


I’m a digital marketer with experience in SEO, content strategy, and online brand growth. I specialize in creating optimized content, improving website rankings, building high-quality backlinks, and driving traffic through effective digital marketing strategies. I enjoy helping businesses strengthen their online presence and turn visitors into customers.