Why Most Machine Learning Projects Fail in Seattle, and How the Right ML Development Company Avoids It

Most machine learning projects in Seattle don’t fail at deployment — they fail before the first model is even trained.

For any business evaluating an ML development company in Seattle, the biggest risk isn’t choosing the wrong algorithm — it’s starting with the wrong foundation.

“What happens when a patient’s referral is written in Spanish?”

The room goes quiet. The model has never seen a non-English referral. The training dataset, fourteen months of historical intake records, contained almost entirely English-language documents. Not because non-English referrals did not exist — they made up 23% of actual incoming volume — but because the data engineer who built the ingestion pipeline had filtered out non-standard format entries to speed up the cleaning process. Nobody defined “non-standard.” Nobody caught it. The filter ran silently for the entire data collection period.

Sixteen weeks. Functionally useless for nearly a quarter of the patient population the platform was built to serve.

This is not an invented scenario. Variations of it happen in Seattle ML projects every quarter. A similar failure plays out in Bellevue FinTech companies whose fraud models were never tested against transaction patterns from newer payment rails. In Redmond enterprise software companies whose sentiment models were trained on five-star review data and deployed against support tickets. In South Lake Union logistics startups whose demand forecasting models learned from pre-pandemic purchasing behaviour and were deployed into a market that no longer exists.

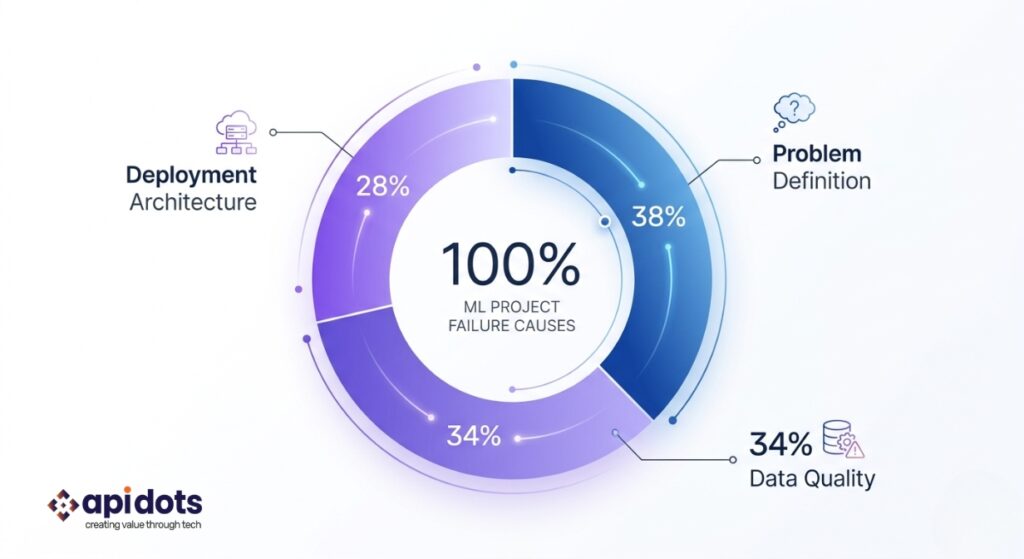

None of these failures happened at the model. None of them happened at deployment. Every single one was locked in during the first two weeks of the engagement, when three specific decisions were made — badly, quickly, and without the information needed to make them correctly.

This blog explains those three decisions, why Seattle’s ML market makes them harder than most buyers expect, and what the companies that actually ship production ML systems do differently from the ones that spend $100,000 producing a notebook.

There is a specific kind of conversation that happens in the first meeting between a Seattle technology company and an ML development vendor. The company’s CTO or product director describes what they want: “We want to use ML to reduce customer churn.” “We need better fraud detection.” “We want to personalise the experience for different user segments.” The vendor nods, asks a few questions about data volume and timeline, and begins scoping a project.

What the CTO has described is a direction. What an ML development project requires is a problem. These are not the same thing, and the gap between them is not a small technical detail. It is the difference between a specification that can be built and one that cannot. “Reduce customer churn” has no definition of done. There is no way to know when the system has worked, which means there is no way to know when it has failed. In the absence of a failure definition, the model will eventually be deployed, performance will drift, and nobody will be able to say with confidence whether the system is working or not. This is how $120,000 projects produce dashboards that nobody looks at six months later.

The reason this failure is endemic to Seattle specifically has to do with the enterprise software culture that dominates the region. Amazon and Microsoft both operate with large, well-resourced product organisations that can afford directional thinking at the executive level. When a VP at Amazon says “improve customer experience,” there are product managers, data scientists, and engineering leads who translate that direction into a precise specification through a structured internal process—the six-pager document, the PR/FAQ, and the working backwards methodology. That translation infrastructure exists inside Amazon. It does not exist in most of the Series A and B companies commissioning ML development from external vendors. When those companies arrive at a vendor meeting with a direction rather than a problem, they expect the vendor to do the translation. Most vendors do it — badly, quickly, and without enough information to get it right — because starting development feels more productive than spending a week writing a specification.

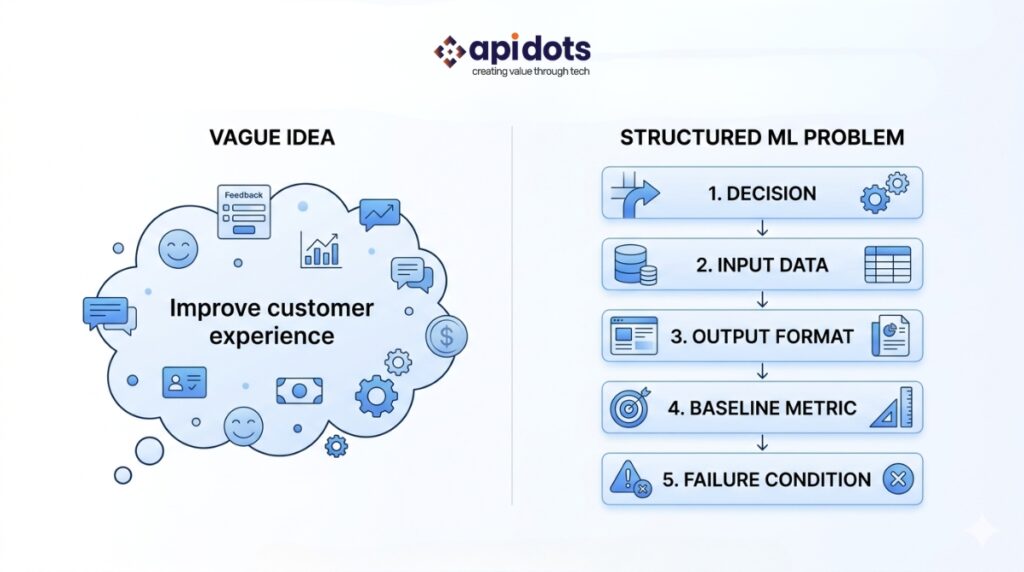

A precise ML problem statement contains five elements. The specific decision the model needs to support. The inputs that are genuinely available at the moment that decision must be made. The output format the decision requires in the system it will integrate with. The performance baseline the model needs to beat to justify the investment. And the explicit definition of failure in production: the condition under which the business will conclude the system is not working and intervene. “We want to predict which customers will churn in the next 30 days, using transactional and behavioral data available at the time of prediction, outputting a risk score between 0 and 1 that our CRM automation layer can act on, beating our current rule-based system’s 61% precision, with the failure condition defined as dropping below 65% precision on real customer cohorts after 60 days in production.” That is a precise ML problem statement. Writing it takes three days of structured conversation between the client’s product lead and an ML development partner who knows what questions to ask.
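One way to make the five elements concrete is to capture them as a small structured record that can be checked for gaps before sign-off. The sketch below is illustrative only: the class name `ProblemStatement`, its field names, and the `missing_elements` and `is_failing` helpers are assumptions introduced here, not part of any vendor's tooling. It encodes the churn example from the paragraph above and contrasts it with a bare direction.

```python
from dataclasses import dataclass, fields
from typing import Optional, Tuple


@dataclass(frozen=True)
class ProblemStatement:
    """Five-element ML problem statement (all field names are hypothetical)."""
    decision: str                             # the decision the model supports
    inputs_at_decision_time: Tuple[str, ...]  # inputs available when deciding
    output_format: str                        # what the downstream system consumes
    baseline_precision: Optional[float]       # performance the model must beat
    failure_precision: Optional[float]        # production floor that triggers intervention
    failure_window_days: Optional[int]        # days in production before the floor applies

    def missing_elements(self):
        """Return the names of elements left empty; [] means complete."""
        missing = []
        for f in fields(self):
            value = getattr(self, f.name)
            if value is None or value == "" or value == ():
                missing.append(f.name)
        return missing

    def is_failing(self, observed_precision, days_in_production):
        """True once the failure condition written into the statement is met."""
        return (days_in_production >= self.failure_window_days
                and observed_precision < self.failure_precision)


# The churn statement quoted in the text.
churn = ProblemStatement(
    decision="Which customers will churn in the next 30 days",
    inputs_at_decision_time=("transactional", "behavioral"),
    output_format="risk score between 0 and 1 for the CRM automation layer",
    baseline_precision=0.61,
    failure_precision=0.65,
    failure_window_days=60,
)

# A direction, not a problem: most elements are simply absent.
direction = ProblemStatement("Reduce customer churn", (), "", None, None, None)

print(churn.missing_elements())      # []
print(direction.missing_elements())  # the five elements that were never defined
```

The point of the check is not the code itself but the forcing function: a statement with any element missing cannot be signed off, and the failure condition becomes something a monitoring job can evaluate rather than a matter of opinion.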

The cost of skipping this step is concrete and documented. A Bellevue-based enterprise SaaS company commissioned ML development to “improve lead scoring.” The vendor built a gradient boosting model trained on CRM data and delivered it after twelve weeks with a strong test-set performance. When the sales team tried to use it, the model’s outputs were operationally meaningless: the CRM data had never been audited for field consistency, and the same lead type could be categorised in six different ways depending on which sales manager entered it. The model had learned to predict label inconsistency. $110,000 spent. No production deployment. The precise problem — defining what a “qualified lead” meant in terms the model could learn from — had never been written down before the first sprint began.

The team at apidots.com runs a problem definition workshop as the mandatory first step of every engagement. The output is a five-element problem statement signed off by both the client’s product lead and the apidots.com technical lead before any development work begins. This step takes three to five days. It is not negotiable. It is also what AI2 — the Allen Institute for AI in South Lake Union, one of the world’s most-cited applied ML research organisations — applies to every research project they publish: the problem statement precedes the method, always. Production ML is no different. The complete ML development methodology that apidots.com follows is built on this principle from the first day.

The opening scenario in this blog — the Spanish-language referrals, the filtering pipeline, the model that was 91% accurate on a test set and functionally useless for 23% of real patients — is a data representativeness failure. Not a modelling failure. Not a deployment failure. A data audit failure that was invisible until week sixteen because nobody ran a data audit.

This is the second decision made in the first two weeks of a Seattle ML project, and it is the one where the gap between notebook ML vendors and production ML vendors is most clearly visible. Most Seattle ML vendors do one of three things when they receive training data from a client: they accept the client’s description of it at face value, they run a brief exploratory analysis focused on volume and technical format, or they discover problems at model evaluation time and recommend “collecting more data.” None of these is a data audit. A data audit is a structured investigation of whether the data is representative of the real-world population the model will encounter after deployment — not whether it is large enough, not whether it is formatted correctly, but whether it reflects the actual distribution of inputs the system will be asked to process.

Seattle’s enterprise data environment makes this failure particularly expensive. The city’s dominant companies are data-rich. AWS S3 buckets with years of operational records, Redshift warehouses full of historical transactions, data lakes that were built by serious engineering teams and feel comprehensive by every technical measure. The volume is real. The representativeness is not always real, and the gap between the two is where Seattle ML projects go wrong. Three specific data problems kill the majority of projects that arrive at apidots.com after a previous engagement failed.

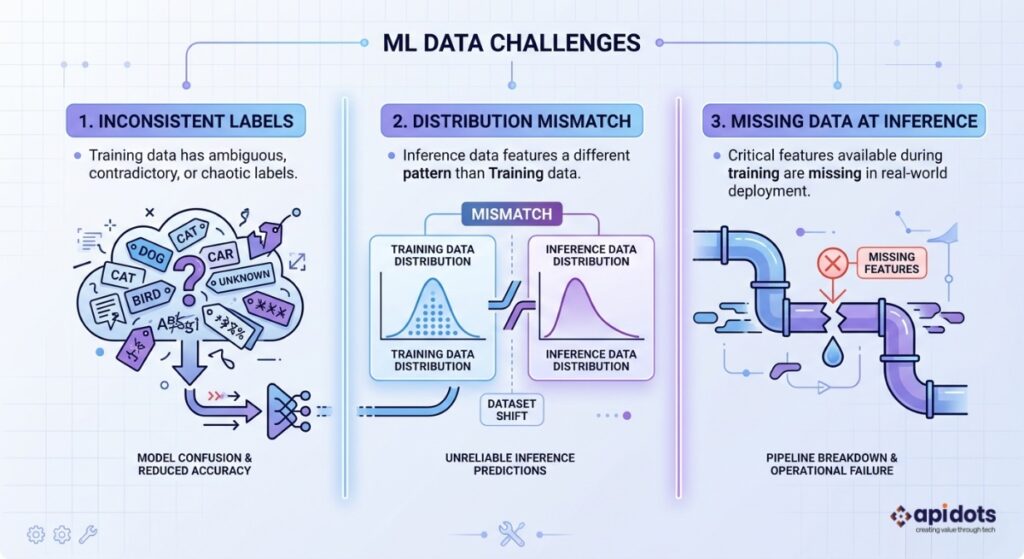

Label inconsistency. When the criteria for labelling training examples are applied differently by different people over time, the model learns to predict the label assignment process rather than the underlying phenomenon the label was meant to capture. A Redmond enterprise software company had two years of support ticket data labelled by severity — critical, high, medium, low. Over those two years, five different support managers had applied those labels, each with a slightly different interpretation of “critical.” The model trained on that data achieved 84% accuracy on the historical test set. In production, it systematically misclassified tickets handled by two of the five managers’ accounts because the label criteria those managers used were underrepresented in the training partition. The fix required re-labelling 40% of the training data with a calibrated protocol. Nobody had run an inter-annotator agreement analysis before the project began.
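An inter-annotator agreement analysis of the kind mentioned above can start with something as simple as Cohen's kappa computed on a sample of tickets that two people label independently. The following is a minimal sketch, assuming two annotators and categorical labels; the function name and the sample labels are made up for illustration, and a five-manager case like the one described would need pairwise comparisons or a multi-rater statistic instead.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labelled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    # Share of items on which the two annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical severity labels from two support managers on the same six tickets.
manager_1 = ["critical", "high", "high", "low", "medium", "critical"]
manager_2 = ["high", "high", "high", "low", "medium", "critical"]

print(round(cohens_kappa(manager_1, manager_2), 3))  # 0.769
```

A kappa well below 1 on a label such as "critical" is a signal that the label criteria need to be written down and calibrated before any model is trained on them.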

Distribution mismatch. The training data represents a population that no longer matches the one the model will encounter in production. A Seattle retail company trained a demand forecasting model on 2019 and 2020 purchasing behaviour and deployed it in 2023. Consumer preference patterns in their core product categories had shifted significantly across that period. The model was optimising for a customer distribution that no longer existed in the same form. Accuracy on the historical test set was strong. Accuracy on the actual 2023 customer population was materially worse. The training data was not wrong — it was accurate for the period it covered. The problem was that nobody had tested whether that period was still representative.
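Testing whether a training period is still representative can be sketched with a Population Stability Index (PSI) over a categorical field. This is one common technique, swapped in here for illustration; the article does not say which statistic any particular audit uses. The function names, the `floor` smoothing value, and the 99/1 training split are assumptions. The 23% production share echoes the referral example earlier in this article, and the 0.25 alert level mentioned in the comment is a widely quoted rule of thumb, not a fixed standard.

```python
import math
from collections import Counter


def category_shares(values, categories, floor=1e-6):
    """Share of each category, floored so empty categories do not divide by zero."""
    counts = Counter(values)
    total = len(values)
    return {c: max(counts[c] / total, floor) for c in categories}


def population_stability_index(training, production, floor=1e-6):
    """PSI between two samples of a categorical feature; 0.0 means identical shares."""
    if not training or not production:
        raise ValueError("both samples must be non-empty")
    categories = set(training) | set(production)
    train = category_shares(training, categories, floor)
    prod = category_shares(production, categories, floor)
    return sum((prod[c] - train[c]) * math.log(prod[c] / train[c])
               for c in categories)


# Hypothetical document-language samples: training data is almost entirely
# English, while 23% of production volume is not.
training_languages = ["en"] * 99 + ["es"] * 1
production_languages = ["en"] * 77 + ["es"] * 23

psi = population_stability_index(training_languages, production_languages)
print(round(psi, 3))  # 0.745, far above the 0.25 level often treated as a major shift
```

Run against a recent sample of real production inputs before modelling starts, a check like this surfaces a mismatch in days rather than at week sixteen.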

Missing context at inference time. Some features are present during model training because they are drawn from historical records, where the outcome is already known, but are unavailable at the point of decision in production. A FinTech company in Bellevue built a fraud detection model trained on historical transaction data. The dataset included the final resolution status of each transaction, a field populated days after the transaction occurred. The model learned to use outcome signals that only exist in retrospect. In production, those fields were empty at the point of decision. The model’s production accuracy was not simply worse than its training accuracy. The two numbers were not comparable, because the model running in production, stripped of its strongest inputs, was not functionally the same model that had been trained.
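A feature availability check for this failure can be sketched by comparing when each field is populated against the moment the decision has to be made. The function name, the field names, and the timestamps below are hypothetical; in practice the populated-at times would come from pipeline metadata or from interviews with the team that owns the source system.

```python
from datetime import datetime, timedelta


def features_unavailable_at_decision(decision_time, populated_at):
    """Return features whose values are only written after the decision time."""
    return sorted(name for name, written in populated_at.items()
                  if written > decision_time)


# Hypothetical fraud example: the decision is made when the transaction occurs.
transaction_time = datetime(2024, 3, 1, 12, 0)
populated_at = {
    "amount": transaction_time,
    "merchant_category": transaction_time,
    # Resolution status is filled in days later, once the case is closed.
    "resolution_status": transaction_time + timedelta(days=3),
}

print(features_unavailable_at_decision(transaction_time, populated_at))
# ['resolution_status']
```

Any feature this check returns has to be dropped from training or replaced with a version that exists at decision time.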

The apidots.com data audit sprint is two weeks with four mandatory outputs: a data quality report covering completeness, consistency, and representativeness relative to the expected production population; a label audit covering inter-annotator agreement and label criteria documentation where applicable; a feature availability audit confirming every input used during training is genuinely available at inference time; and a distribution analysis comparing the training population to the deployment population. The audit report is the go or no-go gate for model development. Development does not begin before it is complete. For regulated industry clients, the audit also includes a compliance assessment of the data handling requirements—which, for Washington state HealthTech companies, means both HIPAA and the My Health My Data Act. The API DOTS approach to predictive ML in regulated environments documents specific failure patterns from this class of problem across finance and industrial deployments.

The University of Washington’s Paul G. Allen School of Computer Science—which produces more ML engineering graduates than almost any program outside MIT and Stanford—applies the same discipline to research publications: dataset construction and validation precede modeling. Every paper that comes out of the Allen School describes the dataset curation methodology in as much detail as the model architecture. Production ML built for Seattle enterprise environments should follow the same standard.

Has your Seattle ML project stalled at the data stage? The team at apidots.com offers a standalone data audit sprint: two weeks, a written report, and an honest assessment of whether your data can support the model you are trying to build. No commitment to further development required.

Book a Data Audit Sprint

There is a moment in most Seattle ML vendor engagements where something technically impressive happens. The model trains. The validation metrics look strong. The vendor schedules a demo. A Streamlit dashboard or a Jupyter notebook renders the predictions in a format a non-technical stakeholder can read. The CTO watches the demo and believes the project is close to done.

It is not close to done. In many cases, it is several months and $60,000 away from done — because the model that was just demonstrated was built for the demo environment, not for the production environment it actually needs to run in.

This is the third decision that is made badly in the first two weeks of a Seattle ML project: the deployment target. Generalist ML vendors default to demo targets because demo targets are fast to build, easy to show, and produce the positive stakeholder reaction that justifies the engagement. A Flask application or a Streamlit dashboard can be assembled in a weekend. A production ML serving infrastructure on AWS SageMaker or Azure ML, integrated with existing IAM roles and VPC configurations, compliance-ready audit logging, sub-100ms response time SLAs, and automated retraining pipelines, takes significantly longer to design and build — which is exactly why it needs to be specified at the start of an engagement, not retrofitted after the demo is approved.

Seattle makes this failure more expensive than it would be in any other US market for a specific reason. Both Amazon and Microsoft are headquartered here. The engineers who built SageMaker’s managed inference endpoints and Azure ML’s real-time serving infrastructure are working in the same city as the buyers evaluating ML systems. When a Seattle CTO at an AWS-native company evaluates a production ML deployment, they are implicitly comparing it against the infrastructure quality they see running inside Amazon. A model served from a single EC2 instance with no load balancing, no model monitoring, no drift detection, and no integration with the buyer’s existing security group configuration is not a production system by Seattle enterprise standards. It is a proof of concept with a misleading name.

For Seattle HealthTech and FinTech companies, the failure moves from technically problematic to legally significant. Washington state’s My Health My Data Act applies to consumer health data collected outside traditional clinical settings: wellness applications, fitness trackers, mental health platforms, symptom checkers. The Act is stricter than federal HIPAA in specific ways that matter to ML deployment: it requires explicit consent mechanisms, specific data deletion rights, and handling protocols that must be built into the data pipeline and serving infrastructure from the beginning. This is materially different from California’s CCPA and substantially different from federal HIPAA. An ML system deployed in an infrastructure that does not satisfy the My Health My Data Act is not a production system for a Washington state HealthTech company. It is a regulatory exposure. The API DOTS healthcare ML practice documents the specific compliance architecture this requires.

The cost of retrofitting a deployment that was built for a demo is documented. A South Lake Union FinTech company discovered six months after their ML vendor delivered a “production” credit risk model that the model’s decision logging did not meet Washington state’s automated decision-making documentation requirements under the Consumer Protection Act. The adverse action records the model generated did not include the feature attribution data the state requires for consumer notification. Retrofitting the logging infrastructure cost four months and $65,000. The original vendor had built a system that worked. It had never been built for the environment it was legally required to operate in. The API DOTS FinTech development practice and cloud services architecture addresses this class of compliance requirement as a design input, not a post-launch audit finding.
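To illustrate what "decision logging with feature attribution" can look like when it is designed in from the start, here is a minimal sketch of a log record that stores the top contributing features next to each decision. Everything in it is an assumption made for illustration: the function name, the field names, the attribution values, and the choice of three factors. The fields a given regulation actually requires are not specified in this article and would need to be confirmed during discovery.

```python
import json
from datetime import datetime, timezone


def build_decision_record(applicant_id, model_version, score, decision,
                          attributions, top_n=3):
    """Assemble one audit-log entry, keeping the strongest feature attributions."""
    # Rank features by the absolute size of their contribution to the score.
    ranked = sorted(attributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return {
        "applicant_id": applicant_id,
        "model_version": model_version,
        "score": score,
        "decision": decision,
        "top_factors": [{"feature": name, "contribution": value}
                        for name, value in ranked[:top_n]],
        "logged_at": datetime.now(timezone.utc).isoformat(),
    }


# Hypothetical attribution values, for example from a SHAP-style explainer.
record = build_decision_record(
    applicant_id="A-1001",
    model_version="credit-risk-1.4.0",
    score=0.27,
    decision="declined",
    attributions={
        "debt_to_income": -0.31,
        "account_age_months": 0.05,
        "recent_delinquencies": -0.22,
        "income": 0.12,
    },
)

print(json.dumps(record["top_factors"], indent=2))
```

Writing the attribution data at decision time is cheap. Reconstructing it months later for decisions already made is the expensive retrofit described above.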

The deployment architecture at apidots.com is specified in the discovery sprint output document, alongside and at the same time as the problem statement and data audit plan. The model and its serving environment are not designed separately, because a model cannot be built correctly for an environment that has not been defined. Every apidots.com ML serving environment includes automated retraining pipelines, confidence monitoring, distribution shift detection, and compliance-ready logging as standard, not as optional extras. The companies presenting production ML systems at AI Con USA in Seattle in June 2026 were not presenting Flask apps. They were presenting systems with uptime dashboards, drift monitoring graphs, and retraining audit logs. Many ML developers in Seattle focus heavily on model building but overlook data readiness.
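Confidence monitoring, one of the components listed above, can be sketched as a rolling window over prediction confidences that raises an alert when the mean drops below a floor. The class name, window size, and threshold are hypothetical, and a real serving stack would typically rely on the monitoring features of its platform rather than hand-rolled code like this.

```python
from collections import deque


class ConfidenceMonitor:
    """Alert when mean confidence over the last `window` predictions is too low."""

    def __init__(self, window, min_mean_confidence):
        self.scores = deque(maxlen=window)  # keeps only the most recent scores
        self.min_mean_confidence = min_mean_confidence

    def record(self, confidence):
        """Store one prediction confidence and report whether to alert."""
        self.scores.append(confidence)
        return self.should_alert()

    def should_alert(self):
        # Stay quiet until the window is full, to avoid alerts on tiny samples.
        if len(self.scores) < self.scores.maxlen:
            return False
        return sum(self.scores) / len(self.scores) < self.min_mean_confidence


monitor = ConfidenceMonitor(window=3, min_mean_confidence=0.7)
alerts = [monitor.record(c) for c in (0.9, 0.9, 0.8, 0.3)]
print(alerts)  # [False, False, False, True]
```

A sustained drop in confidence is often the first visible symptom of distribution shift, which is why it belongs in the serving environment from the first release.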

The companies that successfully deploy production ML in Seattle share a characteristic that has nothing to do with budget, team size, or the vendor they eventually chose. They insist on specificity before they allow any development to begin. This is not a personality trait. It is a process discipline that the most successful ML buyers in Seattle have adopted, consciously or not, from the culture of the dominant companies around them. Amazon’s internal engineering culture operates on the six-pager: a written document that precisely defines the problem, the customer, the constraints, and the success criteria before any engineering resources are committed. Product teams at Amazon write the press release before they write the specification. They define what done looks like before they start. The companies that have worked closely with or inside the Amazon ecosystem — in South Lake Union, in Bellevue, in the AWS partner network — have absorbed this discipline.

Applied to ML development, it produces a specific pattern. The buyer arrives at the first vendor conversation with a written problem statement, not a verbal direction. They have already considered what data they have and what gaps might exist. They have a view on the deployment environment and its constraints. They are not expecting the vendor to invent the project from scratch; they are expecting the vendor to stress-test and refine a specification they have already begun. This is where experienced ML development companies in Seattle separate themselves from generalist software vendors.

The contrast with the failure scenarios above is direct. A Kirkland-based logistics company operating on Azure came to an apidots.com engagement with a written problem statement that had already been reviewed and signed off by their CTO and operations director. The data audit took nine days because their operational data was well-governed, consistently labelled, and had been documented by their data engineering team before the engagement began. The deployment environment was Azure ML on AKS with existing IAM integration already mapped. The model went to production in eleven weeks. It is still running fourteen months later, with no manual retraining intervention required, because the monitoring pipeline that was built in week one continues to catch distribution shifts before they affect prediction quality. The difference was not the algorithm. It was not even the vendor. It was the quality of the decisions made before the first development sprint began. The API DOTS blog on ML in SaaS environments documents the architectural patterns that make this kind of sustained production performance possible.

API DOTS is a specialist ML and AI development firm. The engineers on every engagement have built production ML systems that are live, monitored, and generating measurable business outcomes. The reason the three failure patterns described above appear in almost every failed Seattle ML project that arrives after a previous engagement is not that the previous vendor was incompetent. It is that a development culture that prioritises starting over defining will always produce these outcomes. They are not accidents. They are the natural result of optimising for speed of development rather than probability of production success.

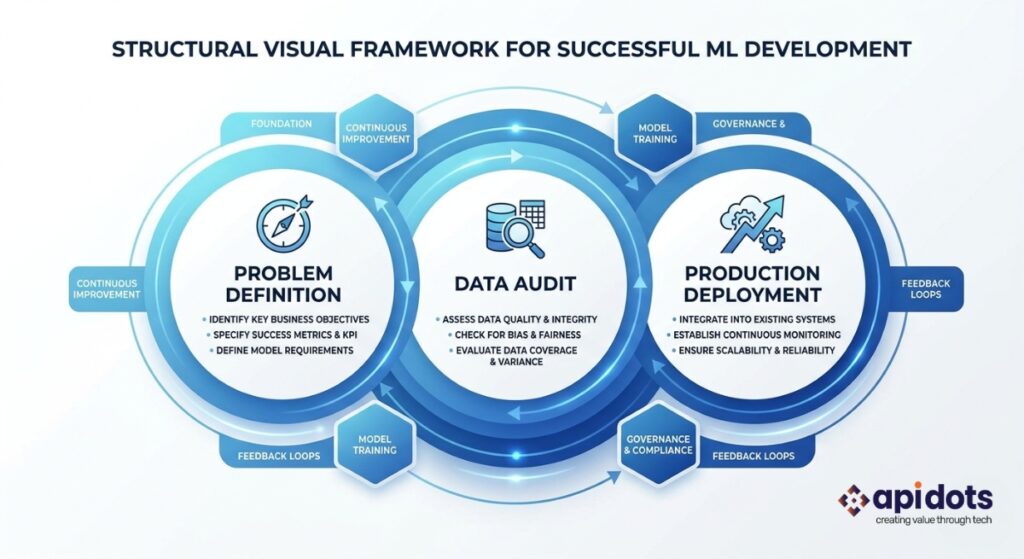

The three decisions apidots.com makes deliberately at the start of every engagement are structured to interrupt that pattern.

The first decision: a model architecture will not be proposed before a five-element problem statement has been written with the client’s product lead and signed off by both parties. This takes three to five days. It is not a formality. It is the foundation on which everything else is built, and it is the step that most competitors skip because it delays the start of billable development work.

The second decision: model development will not begin before a data audit has produced written findings on completeness, consistency, representativeness, and feature availability at inference time. The audit report includes a go or no-go recommendation on the proposed ML approach given the data reality. If the data does not support the problem as defined, that answer is given before development budget is committed — not discovered at model evaluation time.

The third decision: the deployment architecture is specified in week one and the model is built for that environment from the beginning. There is no demo target. There is no “we’ll figure out production deployment later.” The IAM configuration, the compliance requirements, the uptime SLA, the monitoring infrastructure — these are defined alongside the model architecture, not after it. For the complete picture of how every engagement stage is structured from problem definition through post-deployment monitoring, the full ML development guide and the API DOTS AI and ML development practice overview document both the methodology and the rationale.

These three decisions add two to three weeks to the front of an engagement. They prevent failure patterns that cost three to six months and $80,000 to $150,000 to recover from. The full API DOTS services structure is built around this principle: the discovery sprint is not a sales exercise. It is the most important technical work of the entire project. For Seattle and Pacific Northwest companies that have already experienced a failed ML engagement, the recovery audit follows the same three-decision structure — starting with an honest answer about what the previous engagement produced and whether it can be salvaged.

The scale of what is at stake for Seattle companies that get these decisions right — and wrong — is documented in market research that is worth reading before any ML development budget is committed.

Grand View Research projects the global ML market to grow from $65.28 billion in 2026 to $432.63 billion by 2034, at a compound annual growth rate of 26.7%. For Seattle companies, this is not an abstract global number. It describes the compounding capability advantage that companies building production ML now will hold over competitors who spend 2026 and 2027 in failed pilots. A company that ships a production demand forecasting model this year is accumulating training data, monitoring data, and model improvement cycles that a competitor who delays cannot recover in a straight line. The compounding effect of production ML deployment is real and it starts on the day of go-live, not on the day the notebook was finished.
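The growth rate quoted above can be checked against its own endpoints with the standard compound annual growth rate formula, (end / start) ** (1 / years) - 1. The short sketch below uses only the figures from the paragraph; the function name is introduced here for illustration.

```python
def compound_annual_growth_rate(start_value, end_value, years):
    """Constant yearly growth rate that takes start_value to end_value."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# $65.28 billion in 2026 growing to $432.63 billion by 2034.
rate = compound_annual_growth_rate(65.28, 432.63, 2034 - 2026)
print(f"{rate:.1%}")  # 26.7%
```

The two endpoints and the stated 26.7% rate are consistent with each other over the eight-year span.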

Gartner’s research on enterprise AI maturity shows that 73% of enterprise ML projects never reach production. This statistic is not abstract for Seattle. With 2,000 active AI startups and 1,530 open ML engineering roles, Seattle has the talent and infrastructure advantage over every US city except perhaps San Francisco. The infrastructure does not explain the failure rate. The execution gap does — and the execution gap is almost entirely explained by the three failure patterns this blog describes. Problem statements that cannot be built. Data that was never audited. Deployment targets that were optimised for the demo rather than the production environment.

McKinsey’s State of AI research consistently shows that organisations deploying ML at scale report 20 to 30% reductions in operational costs alongside comparable improvements in decision quality. For Seattle’s dominant industries — logistics moving through the Port of Seattle, HealthTech at Fred Hutch and UW Medicine, enterprise software in Bellevue and Redmond, aerospace ML for Boeing’s supplier network in Renton and Everett — those percentages represent tens of millions of dollars annually per company at scale. The difference between achieving those outcomes and not is whether the ML project reaches production. Most do not. The three decisions described in this blog determine which side of that line a given project lands on.

If you are evaluating ML development partners in Seattle, the right question is not which vendor has the best case studies. It is which vendor will spend the first two weeks making the three decisions that determine whether your project succeeds. The team at apidots.com does. Whether you are in South Lake Union, Bellevue, Kirkland, or anywhere across Washington state, that is where we start.

Contact API DOTS for a Free Consultation

How do I choose the right ML development company in Seattle?

Choosing the right ML development company in Seattle comes down to how they handle the first phase of your project.

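Look for a team that:

- Writes a five-element problem statement with your product lead before proposing a model architecture

- Audits your data for completeness, consistency, representativeness, and feature availability before model development begins

- Specifies the production deployment architecture in week one instead of building for a demo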

If a vendor jumps straight into model building without these steps, the risk of failure increases significantly.

Why do most machine learning projects in Seattle fail early?

Most machine learning projects in Seattle fail before development even begins.

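The main reasons are:

- Problem statements that describe a direction and cannot be built

- Training data that was never audited for representativeness

- Deployment targets optimised for the demo instead of the production environment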

It’s rarely a technical issue — it’s a decision-making issue in the early stages.

How does Seattle’s relationship with AWS and Azure affect which ML development company I should choose?

Both AWS SageMaker and Azure Machine Learning were built in Seattle by engineering teams still based here. Seattle enterprise buyers running on AWS or Azure evaluate ML vendors against the infrastructure quality they see inside Amazon and Microsoft. An ML vendor that cannot deploy natively on SageMaker Pipelines, Azure ML managed endpoints, or Kubernetes on EKS or AKS — with IAM integration, compliance-ready logging, and monitoring pipelines — is not a production ML company in the Seattle context. The test is specific: ask for an architecture diagram from a previous production deployment. Ask which exact services were used. Ask how the IAM configuration was structured. A production ML company can answer all three questions immediately and in detail. A notebook company cannot.

What does a data audit include in ML development?

A proper data audit evaluates whether your data can actually support the model you want to build.

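It typically includes:

- A data quality report covering completeness, consistency, and representativeness

- A label audit covering inter-annotator agreement and label criteria documentation

- A feature availability audit confirming every training input exists at inference time

- A distribution analysis comparing the training population to the deployment population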

Without this step, even high-performing models can fail in production.

Can a failed ML project be recovered?

Yes — but it depends on the root cause.

Recovery is possible if:

In many cases, a short technical audit can determine whether to fix, rebuild, or restart.

