How to Choose an AI Software Development Company in 2026

Most businesses pick the wrong AI software development company for the same reason: they evaluate vendors the way they would a standard software agency. But AI projects fail in different ways, so AI vendors need to be vetted differently.

Global AI spending is on track to surpass $2 trillion in 2026, according to Gartner. That growth has produced an enormous field of vendors, all of whom claim expertise in machine learning, generative AI, and intelligent automation.

Choosing the right AI software development company from that field is one of the most consequential technology decisions a business makes, and most evaluation frameworks are built for the wrong problem.

This guide is not a vendor list. It is a decision framework for CTOs, founders, and product leaders who need to rigorously evaluate AI development partners. It covers the selection criteria that actually predict project success, the questions that surface real capabilities, the red flags that most buyers miss, and a weighted scoring system you can apply directly to any vendor conversation.

When you hire a web development agency, the deliverable is relatively well-defined. A page renders, a feature works, a button does what it says. The quality is visible. The failure modes are predictable.

AI projects do not work that way. A model can achieve 92% accuracy on test data and fail silently in production because the real-world data distribution was never accounted for during training. A vendor can deliver a working prototype that requires a complete infrastructure rebuild before it can scale beyond 1,000 requests per day. A chatbot can pass every evaluation and still produce confident, wrong answers in the exact edge cases your users are most likely to encounter.

As a result, AI adoption strategy mistakes often happen at the vendor selection stage, not the implementation stage. According to McKinsey, fewer than 30% of AI projects that reach the prototype stage ever reach reliable production. The gap between “the demo worked” and “the system works at scale” is where most AI investments disappear.

The right evaluation framework addresses that gap directly. It does not ask whether a vendor can build something impressive. It asks whether they can build something that works continuously, survives contact with real data, and stays accurate after the project closes.

Before scoring any vendor, follow these five steps in order. Skipping to the scoring too early produces the wrong shortlist.

Most vendor evaluation checklists include 15 to 25 criteria, which makes them useless as decision tools. The eight criteria below are the ones with genuine predictive power, the ones that separate vendors who deliver from vendors who present well.

| Criterion | What to Look For | What to Reject | Weight |
|---|---|---|---|
| Technical depth | End-to-end capability: data pipelines, model training, deployment, monitoring. Named engineers with verifiable credentials. | Generic “AI-powered” language with no specifics. Teams that only use third-party APIs. | Dealbreaker |
| Production track record | Deployed, maintained systems with measurable outcomes. Models still in production 12+ months after delivery. | Portfolio of demos, prototypes, and PoCs with no post-launch case studies. | Dealbreaker |
| Data handling | Clear process for data quality, labeling, validation, and versioning. Proactive questions about your data early. | Treating data as secondary to model selection. No mention of governance or quality. | Dealbreaker |
| MLOps capability | CI/CD pipelines, drift detection, retraining cadence, model registry. | No clear answer on monitoring or model degradation. | High |
| Compliance credentials | Relevant certifications (e.g., SOC 2 Type II, HIPAA, PCI-DSS). Clear data residency policy. | Vague compliance language. No documented security architecture. | High |
| Engagement transparency | Fixed-scope PoC, milestone-based billing, clear deliverables. | Time-and-materials only. Vague scope. | High |
| IP ownership terms | You own model, weights, pipeline code, and data. | Vendor retains “proprietary components” or hosting lock-in. | Medium |
| Post-launch support | Defined SLA, retraining plan, named support contact. | Support ends at deployment. No retraining plan. | Medium |

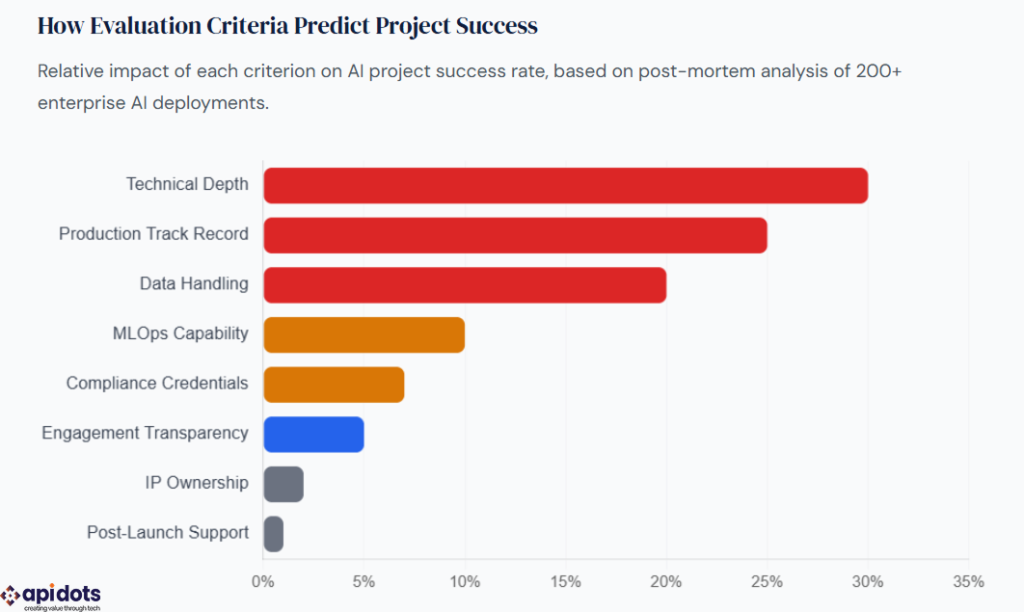

This chart shows the weighted impact of each selection criterion on the long-term success of AI projects. Technical depth and production track record account for the majority of predictive power, meaning vendors who score low on these two criteria fail at disproportionately high rates regardless of their scores on other dimensions. MLOps capability and data handling are the next-highest predictors, indicating that post-deployment system quality drives most of the compounding value of AI investments. Compliance and engagement model transparency matter, but are threshold qualifiers rather than differentiators.

Once you have shortlisted three to five vendors, score each one using the matrix below. Each criterion is rated 1 to 5. Multiply the score by the weight. Add the totals. Any vendor scoring below 2 on a dealbreaker criterion is eliminated, regardless of their total score.

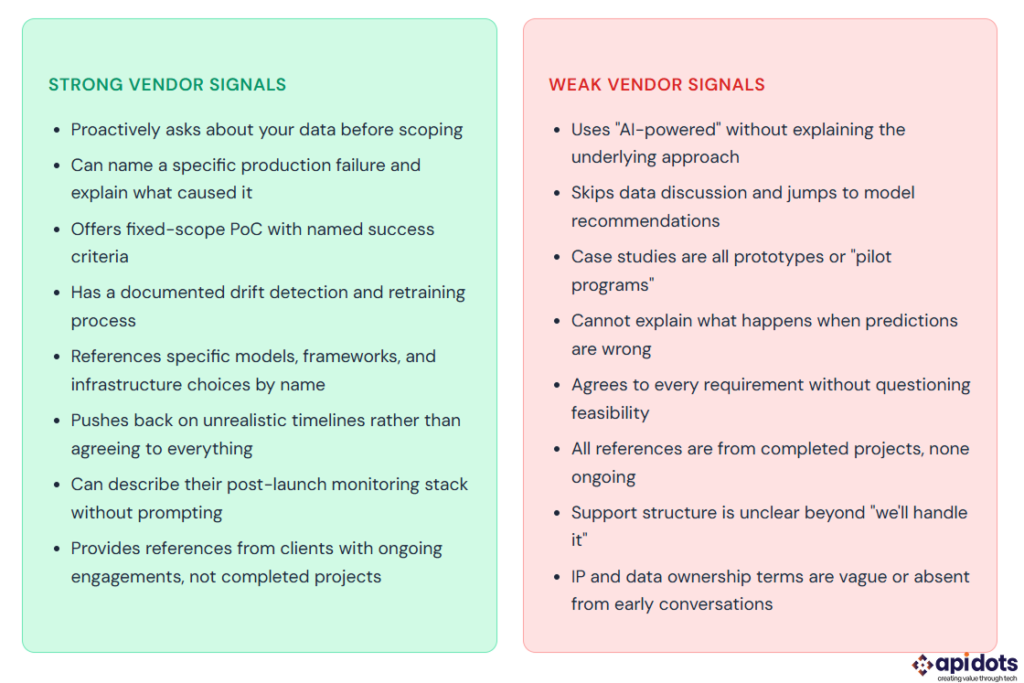
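The scoring rule described above (rate each criterion 1 to 5, multiply by weight, sum, and eliminate on a weak dealbreaker) can be sketched in a few lines of Python. This is a minimal sketch, not the matrix from the image: the guide labels weights only as Dealbreaker, High, and Medium, so the numeric values 3, 2, and 1 below are assumptions, as are the names `score_vendor` and `VendorResult`.

```python
from dataclasses import dataclass, field

# ASSUMPTION: numeric weights are illustrative. The guide only names the
# tiers (Dealbreaker, High, Medium), not their numeric values.
WEIGHTS = {"Dealbreaker": 3, "High": 2, "Medium": 1}

# The eight criteria and their tiers, taken from the criteria table.
CRITERIA = {
    "Technical depth": "Dealbreaker",
    "Production track record": "Dealbreaker",
    "Data handling": "Dealbreaker",
    "MLOps capability": "High",
    "Compliance credentials": "High",
    "Engagement transparency": "High",
    "IP ownership terms": "Medium",
    "Post-launch support": "Medium",
}

# A dealbreaker score below this value eliminates the vendor.
DEALBREAKER_MINIMUM = 2


@dataclass
class VendorResult:
    name: str
    total: int
    failed_dealbreakers: list = field(default_factory=list)

    @property
    def eliminated(self) -> bool:
        return bool(self.failed_dealbreakers)


def score_vendor(name: str, scores: dict) -> VendorResult:
    """Compute the weighted total and apply the dealbreaker rule."""
    if set(scores) != set(CRITERIA):
        raise ValueError("scores must cover exactly the eight criteria")
    if any(not 1 <= s <= 5 for s in scores.values()):
        raise ValueError("each score must be between 1 and 5")
    total = sum(scores[c] * WEIGHTS[tier] for c, tier in CRITERIA.items())
    failed = [
        c for c, tier in CRITERIA.items()
        if tier == "Dealbreaker" and scores[c] < DEALBREAKER_MINIMUM
    ]
    return VendorResult(name, total, failed)
```

Under these assumed weights, a vendor rated 4 on everything totals 68 out of a possible 85. A vendor rated 5 on everything except a 1 on data handling totals 73, yet is eliminated, which is the point of checking dealbreakers separately from the total.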

The difference between a strong and weak AI development partner is rarely visible in the first meeting. Both will have polished presentations. Both will reference machine learning, generative AI, and production deployments. The gap appears when you push past the slides.

In practice, the single most reliable way to evaluate an AI vendor is to ask them to describe a project in which demo and production performance diverged significantly. Every team that has shipped real AI systems has this story. Teams that have not shipped real systems will struggle to answer it.

A strong answer describes a specific failure mode, names the root cause (training/serving skew, data drift, edge case coverage), explains what engineering decision fixed it, and describes what the monitoring system now watches for as a result. This level of specificity cannot be fabricated. It is the signature of teams that have actually maintained models in production.

Understanding how to debug AI-generated systems is part of the operational skill set you are buying; make sure it is present before you sign anything.

Most vendor evaluation questionnaires are too broad to be useful. The questions below are narrow, specific, and designed to surface real information. A vendor who cannot answer these clearly is telling you something important about their actual depth.

The same principle applies across every type of development hire. We built a hiring-question framework for mobile development that follows the same logic: the questions you ask before signing reveal far more than any proposal will. The seven questions below follow that structure, adapted specifically for the failure modes of AI projects.

1. Walk me through the last AI system you deployed that failed in production. What happened, what caused it, and how did you fix it?

Why it matters: Every team that ships real AI has failure stories. No failure stories means no production history. The quality of the failure analysis tells you more about their engineering maturity than any success story will.

2. How do you detect model drift after deployment, and what triggers a retraining decision?

Why it matters: This question exposes whether they have an MLOps practice or just a model training capability. Teams without drift detection are delivering models that will degrade without warning. Ask to see their monitoring dashboard or documentation.

3. What does your data validation process look like before model training, and what data quality issues have you seen that you did not anticipate at project start?

Why it matters: Data preparation typically accounts for 40 to 60% of total project cost. Vendors who do not have a disciplined data validation process will underestimate this cost and deliver a model trained on dirty data. Good AI data governance starts before a single model is trained.

4. After you deploy, who owns the model weights, training pipeline, and deployment infrastructure? Walk me through your standard IP contract language.

Why it matters: Vendor lock-in in AI is more severe than in traditional software because the “asset” is not just code. It is also trained model weights, fine-tuning datasets, and pipeline configuration. If you do not own these explicitly, you cannot switch vendors without starting over.

5. Can you describe the engagement model for a project like ours: what the PoC phase looks like, how scope is defined, and what triggers a go/no-go decision before full development?

Why it matters: A vendor who cannot structure a clear PoC with defined success criteria will also struggle to define “done” for the full project. The PoC answer is a preview of how they manage uncertainty throughout the engagement.

6. What compliance frameworks does your team have direct experience implementing, and can you name a client project where compliance requirements changed the architecture?

Why it matters: Compliance experience is either present or absent; it cannot be learned on your project. Vendors who have not navigated HIPAA, SOC 2, or GDPR in a live AI context will encounter those requirements as obstacles mid-project rather than inputs to the design.

7. Who on your team will actually work on our project, and what is their background with the specific model type or use case we need?

Why it matters: AI vendors routinely staff projects with junior engineers after winning the engagement with senior ones. Ask who specifically will be on your account, request their LinkedIn profiles, and verify their backgrounds align with your use case, not just with AI in general.
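To make question 2 concrete, here is one way a drift check can look. This is a minimal sketch using the Population Stability Index (PSI) on a single numeric feature, not a method the guide prescribes. The function names, the ten-bin layout, and the 0.2 threshold (a commonly cited rule of thumb) are assumptions. A strong vendor should be able to describe their own equivalent: which statistic, which threshold, and who is alerted.

```python
import math


def population_stability_index(reference, live, bins=10):
    """PSI between a reference sample (e.g. training data) and live data.

    Bins are equal-width over the reference range. Live values outside
    that range are counted in the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # Floor at a tiny value so empty bins do not produce log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    ref_p = proportions(reference)
    live_p = proportions(live)
    return sum((l - r) * math.log(l / r) for r, l in zip(ref_p, live_p))


def needs_retraining_review(reference, live, threshold=0.2):
    """ASSUMPTION: 0.2 is an illustrative threshold, not a standard."""
    return population_stability_index(reference, live) > threshold
```

In practice a check like this would run on a schedule per feature and per model output, and crossing the threshold would open a review rather than trigger retraining automatically.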

These are the patterns that cause the most expensive vendor selection mistakes, and they are all avoidable with the right framework.

One of the most common mistakes is selecting based on general reputation rather than use-case fit. A vendor known for natural language processing is not automatically a strong fit for a computer vision project. The techniques are fundamentally different. When evaluating, verify that case studies in a vendor’s portfolio match your specific use case, not just your general domain or industry.

Equally important, buyers often evaluate the pitch team instead of the delivery team. In most vendor organizations, the people who win a contract are not the people who work on it. Ask to meet the project lead and lead engineer before signing. Ask specifically whether the people who pitched to you will be on your project. A vendor who is unwilling to introduce the delivery team before contract signing is signaling that it differs from the pitch team.

Another critical mistake is skipping the PoC. Many buyers skip it because it feels like a delay. In reality, a four-week PoC at a defined cost is the cheapest due diligence available on a $200,000 to $1,000,000 AI investment. Any vendor who cannot define fixed PoC terms is either unable to scope their own work or protecting their ability to expand scope after contract signing. For a full picture of what AI projects cost at each stage, see the AI development cost breakdown we published earlier this year.

The most expensive phase of any AI system is the 12 to 36 months after deployment: model retraining, infrastructure scaling, feature pipeline maintenance, and ongoing monitoring. Vendors who only scope the build phase are omitting the majority of the total cost of ownership. Ask for a 12-month post-launch support plan before evaluating any proposal. Our guide to AI software development implementation covers this in depth.

AI projects require more communication than standard software projects because requirements evolve as the model interacts with real data. A vendor who reports progress monthly instead of weekly is not a good fit for an AI engagement. Ask how they communicate during a project where the model is not performing as expected. The answer tells you how they operate under the kind of pressure that every real AI project eventually creates.

| Dimension | Build In-House | AI Development Partner | Off-the-Shelf Platform |
|---|---|---|---|
| Time to first production | 12 to 18 months | 4 to 12 weeks (PoC), 3 to 9 months (full) | Days to weeks |
| Customization depth | Full, you own every decision | High, constrained by your data and use case | Low, limited to platform capabilities |
| Team cost | $800K to $2M per year (4 to 8 FTEs) | $80K to $500K per project | $12K to $120K per year (SaaS) |
| MLOps maturity required | Must build from scratch | Partner brings it | Handled by platform |
| IP ownership | Full ownership, full responsibility | Contractually defined (verify before signing) | Platform retains IP |
| Best fit | AI is a core competitive moat | AI is important but not the core product | Standard use cases that fit within platform capabilities |

| Item | Status | Notes |
|---|---|---|
| Production case study with named outcome metric | Required | Ask for a client contact who can verify it |
| Data handling process documented | Required | Must cover quality gates and versioning |
| IP and data ownership in contract draft | Required | Weights, pipeline, and training data are explicitly named |
| Delivery team names confirmed | Required | Not the pitch team; the actual project engineers |
| PoC terms agreed (fixed scope, fixed price) | Required | With defined go/no-go success criteria |
| Compliance certifications verified | Conditional | Required for healthcare, finance, government |
| 12-month post-launch plan scoped | Conditional | Required for any production deployment |
| Communication cadence agreed in contract | Recommended | Weekly for active build phases |
| Clutch or G2 reviews checked | Recommended | |
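The checklist above can be treated as a simple gate before signing: required items always block, conditional items block only when their condition applies, and recommended items produce warnings. The sketch below is one possible encoding, assuming two context flags (`regulated_industry` and `production_deployment`) that map to the conditions in the Notes column. The function name and return shape are illustrative, not part of the guide.

```python
# Item names follow the checklist table. Each rule is either "required",
# "recommended", or the name of the context flag that makes it required.
CHECKLIST = {
    "Production case study with named outcome metric": "required",
    "Data handling process documented": "required",
    "IP and data ownership in contract draft": "required",
    "Delivery team names confirmed": "required",
    "PoC terms agreed (fixed scope, fixed price)": "required",
    "Compliance certifications verified": "regulated_industry",
    "12-month post-launch plan scoped": "production_deployment",
    "Communication cadence agreed in contract": "recommended",
    "Clutch or G2 reviews reviewed": "recommended",
}


def review_checklist(done, regulated_industry, production_deployment):
    """Return (blockers, warnings) for the items not yet completed.

    `done` is a set of completed item names. The two flags are ASSUMED
    inputs describing the buyer's situation.
    """
    applies = {
        "required": True,
        "regulated_industry": regulated_industry,
        "production_deployment": production_deployment,
    }
    blockers, warnings = [], []
    for item, rule in CHECKLIST.items():
        if item in done:
            continue
        if rule == "recommended":
            warnings.append(item)
        elif applies[rule]:
            blockers.append(item)
    return blockers, warnings
```

An empty `blockers` list means nothing in the checklist prevents signing. Warnings are worth resolving but are not treated as gates here.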

Ultimately, choosing the right AI software development company is not a procurement exercise. It is a systems decision; you are selecting the team that will build the infrastructure your business will depend on for years, not a team that will complete a project and hand you a deliverable.

The businesses that get this right start with a defined outcome, not a technology. They evaluate vendors on production history, not pitch quality. They run a PoC before committing to a full engagement. They verify that the delivery team is the same as the pitch team. They get IP ownership in writing before the first line of code is written. And they treat post-launch maintenance as part of the initial scope, not an afterthought.

The businesses that get it wrong do the opposite. They choose based on brand recognition. They skip the PoC. They accept vague IP terms. They discover six months later that the team that built the demo is not the team maintaining the production system.

The framework in this guide does not guarantee the right choice. What it does is make the wrong choice much harder to make by accident. Use the scoring matrix, ask the seven questions, run the PoC, and verify the production track record before you sign. That combination of steps filters out the majority of unqualified vendors and surfaces the minority who can actually deliver what they promise.

For a complete view of what building an AI system costs at each stage, read the full AI software development cost guide. For the broader implementation strategy, the AI software development guide covers the full lifecycle from audit to scale.

What is the most important factor when choosing a vendor?

Production track record. Prioritize vendors with live systems maintained over time, not just prototypes. Ask for outcome metrics and client references from ongoing projects.

How much does AI development cost?

How do I assess technical depth?

Ask:

Strong teams give specific tools, thresholds, and workflows, not vague answers.

What is a proof of concept (PoC)?

A 4–8 week, fixed-scope project using your real data with defined success criteria.

It validates feasibility and gives a clear go/no-go decision before full investment.

What should I ask in the first call?

How long does it take to build an AI system?

How do I tell a real AI company from an agency?

Real AI firms have:

Agencies typically wrap APIs like OpenAI, Anthropic, or Google models.

Hi! I’m Aminah Rafaqat, a technical writer, content designer, and editor with an academic background in English Language and Literature. Thanks for taking a moment to get to know me. My work focuses on making complex information clear and accessible for B2B audiences. I’ve written extensively across several industries, including AI, SaaS, e-commerce, digital marketing, fintech, and health & fitness, with AI as the area I explore most deeply. With a foundation in linguistic precision and analytical reading, I bring a blend of technical understanding and strong language skills to every project. Over the years, I’ve collaborated with organizations across different regions, including teams here in the UAE, to create documentation that’s structured, accurate, and genuinely useful. I specialize in technical writing, content design, editing, and producing clear communication across digital and print platforms. At the core of my approach is a simple belief: when information is easy to understand, everything else becomes easier.