Machine Learning Development in California: Salaries, Vetting & In-House vs Agency (2026)

Here is what most hiring guides for ML developers in California will not tell you. The market is not short of people who call themselves machine learning engineers. It is short of people who have actually deployed ML models to production, maintained them over time, watched them drift, diagnosed the failure, and fixed it under pressure. That specific capability is what separates a $150,000 ML hire from a $300,000 one in San Francisco — and it is almost impossible to identify from a resume alone.

Global AI talent demand currently exceeds supply by 3.2 to 1. There are over 1.6 million open ML and AI roles worldwide with only 518,000 qualified candidates available to fill them. In California, that imbalance is amplified: 6,827 ML jobs are open in the state as of Q2 2026, and companies including OpenAI, Google DeepMind, Databricks, Anthropic, and hundreds of well-funded startups are all competing in the same talent pool. Average time-to-fill for senior ML roles in financial services and healthcare has reached six to seven months.

This guide is built for California hiring managers, CTOs, and founders who need honest, data-backed answers to the questions that matter: what the real salary ranges are across San Francisco, Los Angeles, and San Jose; what skills to screen for and which are being wildly overstated on resumes; how to structure a vetting process that actually finds production-capable engineers; and when it makes more sense to partner with a specialist ML development firm than to hire at all.

For the full technical context, read the complete resource: Machine Learning Software Development Guide.

California leads every US state in ML job volume, ML salary levels, and ML company density. Understanding the specific mechanics of this market — not just the headline numbers — is what allows you to hire smarter rather than just faster.

LinkedIn data shows that demand for ML engineers grew 344% over the four years from 2020 to 2024. University programmes, bootcamps, and online courses have all expanded their ML curricula significantly in that period, but the production-capability gap has not closed. As one California-based ML staffing specialist noted: when screening candidates for senior roles, roughly 60% of resumes that arrive describe notebook-only experience. The candidate trained a model, achieved good metrics on a test set, and perhaps wrote a paper about it. That is not what California companies are paying $220,000 to hire.

The distinction matters at every level of the hiring process. An engineer who has only worked in Jupyter notebooks has not encountered model drift, production latency requirements, data pipeline failures, or the operational complexity of serving predictions to thousands of concurrent users. These are not edge cases in production ML — they are the daily reality of maintaining a live system.

3.2:1 Global ML talent demand-to-supply ratio in 2026. 1.6M open roles, only 518K qualified candidates available worldwide. (Source: Second Talent / World Economic Forum data)

The ML engineering skillset required in California in 2026 differs substantially from what was expected in 2023. Three capabilities in particular have shifted from advanced differentiators to baseline expectations for senior roles:

A 2026 survey of technology companies found that 96.4% cite the lack of candidates with the necessary skills as their primary challenge in technical hiring. This is not a California-specific problem; it is a structural market condition, driven by AI adoption moving faster than both academic training and corporate upskilling. McKinsey research shows that the share of organisations using AI in at least one business function rose from 78% to 88% year over year. Yet only about one-third of organisations report having begun to scale ML programmes across the organisation. Adoption is happening faster than production capability is being built.

Also Read: AI and Machine Learning in SaaS Applications

Most job descriptions for ML roles in California are written by people who have read about machine learning rather than hired for it. They list frameworks, programming languages, and academic credentials as primary requirements. The engineers who cause the most expensive failures in California ML projects usually have all of those things on their resumes.

Here is the skills framework that the team at apidots.com uses when evaluating ML candidates and when scoping client projects. It is ordered by what actually predicts production success, not by what looks impressive on a CV.

Python proficiency is table stakes — but the quality of Python matters more than the fact of it. Production ML code needs to be maintainable, testable, and reviewable by engineers who were not involved in writing it. Ask to see code written for production, not for a hackathon or a university project. Code quality is one of the most reliable signals of production experience.

Beyond Python, evaluate depth rather than breadth in ML frameworks. An engineer who knows PyTorch deeply — who understands what is happening in the computational graph, who can debug a training instability, who can optimise memory usage for a specific GPU configuration — is substantially more valuable than one who has a passing familiarity with six different frameworks. Framework-hopping is a signal of breadth-over-depth learning patterns, which is common in engineers who have learned through courses rather than production work.

Statistical foundations remain critically important despite being frequently undervalued in California hiring. An engineer who cannot explain the difference between precision and recall in plain language, or who cannot reason about class imbalance without being prompted, has gaps that will surface at the worst possible time — typically when a stakeholder asks why the model is producing unexpected outputs in production.
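The class-imbalance point is easier to see with numbers. Below is a minimal Python sketch that computes accuracy, precision and recall from a confusion matrix. It is our illustration, not a prescribed interview exercise, and the fraud numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Confusion:
    """Counts for a binary classifier, where 'positive' means e.g. 'fraud'."""
    tp: int  # positives correctly flagged
    fp: int  # negatives wrongly flagged
    fn: int  # positives missed
    tn: int  # negatives correctly left alone

    @property
    def accuracy(self) -> float:
        return (self.tp + self.tn) / (self.tp + self.fp + self.fn + self.tn)

    @property
    def precision(self) -> float:
        # Of everything flagged, how much was really positive? 0.0 if nothing was flagged.
        flagged = self.tp + self.fp
        return self.tp / flagged if flagged else 0.0

    @property
    def recall(self) -> float:
        # Of all real positives, how many did the model catch?
        actual = self.tp + self.fn
        return self.tp / actual if actual else 0.0


def confusion_from(labels: Sequence[bool], preds: Sequence[bool]) -> Confusion:
    if len(labels) != len(preds):
        raise ValueError("labels and preds must be the same length")
    pairs = list(zip(labels, preds))
    return Confusion(
        tp=sum(1 for y, p in pairs if y and p),
        fp=sum(1 for y, p in pairs if not y and p),
        fn=sum(1 for y, p in pairs if y and not p),
        tn=sum(1 for y, p in pairs if not y and not p),
    )


# Hypothetical example: 1,000 transactions, 10 of them fraud, and a model that never flags anything.
labels = [True] * 10 + [False] * 990
never_flags = [False] * 1000
m = confusion_from(labels, never_flags)
print(f"accuracy={m.accuracy:.2f} precision={m.precision:.2f} recall={m.recall:.2f}")
```

The "never flag anything" model scores 0.99 accuracy while catching no fraud at all (recall 0.0). A candidate who reaches for precision and recall before accuracy on a dataset like this is reasoning about imbalance correctly.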

Natural language processing has become the dominant ML discipline in California hiring. The emergence of large language model deployment as a standard engineering problem — rather than a frontier research problem — means NLP skills are now assessed at every level of the ML engineering stack. What was once a specialisation is now a baseline expectation for senior California roles.

Computer vision remains a critical discipline specifically in California’s autonomous systems, manufacturing quality assurance, and healthcare imaging sectors. San Jose and Los Angeles are particularly active markets for computer vision engineers because of the concentration of semiconductor, medtech, and robotics companies in those cities. The team at apidots.com has built production computer vision systems for clients in all three of these sectors, and the technical requirements differ substantially between them — which is why sector experience matters as much as technical skill.

MLOps is where most California ML projects succeed or fail. California companies pay their highest premiums for engineers who can do four things: build model serving infrastructure that handles variable load, keep p99 prediction latency within its target, implement A/B testing for model versions, and build automated retraining pipelines triggered by detected drift. This is no coincidence. It reflects what companies have learned from deploying ML systems that degraded silently over time. For a detailed breakdown of how MLOps fits into the full ML development lifecycle, read: Machine Learning Software Development.
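p99 targets come up both here and in the vetting questions later in this guide, so a minimal sketch of checking a p99 latency budget may help. It uses the nearest-rank percentile definition. The 100 ms budget and the sample latencies are illustrative assumptions. In production this is usually computed by the metrics stack rather than by application code.

```python
from typing import Sequence


def percentile_nearest_rank(samples: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one latency sample")
    if not 1 <= pct <= 100:
        raise ValueError("pct must be an integer from 1 to 100")
    ordered = sorted(samples)
    rank = (pct * len(ordered) + 99) // 100  # integer form of ceil(pct / 100 * n)
    return ordered[rank - 1]


def meets_p99_budget(latencies_ms: Sequence[float], budget_ms: float) -> bool:
    """True if the 99th-percentile latency is within the (hypothetical) budget."""
    return percentile_nearest_rank(latencies_ms, 99) <= budget_ms


# Illustrative window: 98 fast requests and two slow ones.
window = [20.0] * 98 + [150.0, 400.0]
print(sum(window) / len(window), percentile_nearest_rank(window, 99), meets_p99_budget(window, 100.0))
```

In this window the mean is about 25 ms but p99 is 150 ms, so the 100 ms budget check fails. That tail is exactly what an average hides.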

California ML engineering in 2026 is an interdisciplinary, collaborative practice. The engineers who deliver the most value are not those who can train the most accurate model in isolation — they are those who can translate a business problem into an ML problem, explain their model’s behaviour and failure modes to a non-technical stakeholder, and work iteratively with product and data teams to refine the problem statement as they learn from real data.

This sounds obvious when stated directly, but it is systematically under-evaluated in California ML hiring processes. Most technical interviews focus heavily on algorithm knowledge and coding exercises, and almost entirely ignore the candidate’s ability to communicate uncertainty, push back on an underspecified problem, or explain why a simpler approach might be more appropriate than the complex one the interviewer was expecting.

API Dots builds every client engagement around the principle that the most important skill in ML development is the ability to define the right problem before proposing a solution. It is the same principle we apply when evaluating the engineers on our own team.

These figures are drawn from Q1 2026 salary data across Glassdoor, Built In, Indeed, and specialist ML recruitment firm data. They represent actual offer data, not inflated self-reported survey figures.

The Bay Area is the highest-paying ML market in the world, not just in the United States. Glassdoor’s Q1 2026 data for San Francisco shows an average ML engineer salary of $222,203, roughly 70% above the national average of $131,000. The typical offer range for experienced engineers sits between $181,716 and $276,815, with top earners exceeding $334,930. For Lead and Principal ML roles, ML specialist recruitment firms report base salaries reaching $355,000. Total compensation packages at FAANG-level companies regularly range from $320,000 to $550,000 once equity and bonuses are included.

The Bay Area premium reflects genuine market conditions: you are competing with OpenAI (valued at $852 billion as of early 2026 and actively expanding its office footprint to over one million square feet in San Francisco), Anthropic, Google DeepMind, Meta AI, Databricks, Scale AI, and thousands of funded startups for every qualified ML engineer who enters the market. When an engineer receives four competing offers within a week of becoming available, compensation benchmarks rise accordingly.

Southern California has a distinct ML salary structure. Mid-to-senior ML engineer roles in Los Angeles and Orange County (including Irvine) typically range from $165,000 to $194,960 in base salary. The Los Angeles market is particularly strong in three areas: entertainment technology and media AI, streaming recommendation systems, and autonomous vehicle software, with several autonomous vehicle startups anchored in the region. HealthTech and BioTech ML roles in San Diego command a premium at the senior level because of the concentration of pharmaceutical and genomics companies in that market.

San Jose and the broader Silicon Valley corridor track closely with San Francisco in ML compensation. The semiconductor industry’s deep investment in AI hardware has driven demand for ML engineers with hardware-awareness — specifically those who understand GPU memory constraints, inference optimisation, and edge deployment. Intel, Nvidia, and a constellation of chip design startups pay Bay Area salaries for ML engineers who can work at the hardware-software interface, which is a specialisation that commands premiums even in an already-premium market.

Base salary is only the starting point. Total compensation for senior California ML engineers typically includes three further components. Equity often represents 20 to 40% of total package value. Annual bonuses run at 10 to 20% of base. A benefits package (health insurance, 401(k) matching, and a professional development budget) adds a further 15 to 25% of base salary in employer cost. The fully loaded annual cost of a senior ML hire in San Francisco regularly exceeds $300,000 and can approach $400,000 for principal-level engineers with deep MLOps and LLM expertise.
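The components above can be combined into a rough cost estimate. This Python sketch works under stated assumptions. Equity is treated as a share of the total package (base + bonus + equity), and benefits as a percentage of base. The rates are inputs you choose. Real equity cost depends on grant size, vesting and valuation, none of which this models.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CompBreakdown:
    base: float
    bonus: float
    equity: float
    benefits: float

    @property
    def total_package(self) -> float:
        """What the engineer sees: base + bonus + equity."""
        return self.base + self.bonus + self.equity

    @property
    def fully_loaded(self) -> float:
        """Employer view: the package plus benefits overhead."""
        return self.total_package + self.benefits


def estimate_comp(base: float, bonus_rate: float, benefits_rate: float,
                  equity_share: float) -> CompBreakdown:
    """Assumes equity_share is a fraction of the *total* package, not of base."""
    if not 0.0 <= equity_share < 1.0:
        raise ValueError("equity_share must be in [0, 1)")
    bonus = base * bonus_rate
    cash = base + bonus
    # If equity is a share s of the total T, then T = cash / (1 - s) and equity = T - cash.
    equity = cash / (1.0 - equity_share) - cash
    return CompBreakdown(base, bonus, equity, base * benefits_rate)


# The San Francisco average base from above, with illustrative rates and no equity.
sf = estimate_comp(222_203, bonus_rate=0.15, benefits_rate=0.20, equity_share=0.0)
print(round(sf.fully_loaded))
```

With the $222,203 San Francisco average, a 15% bonus and a 20% benefits overhead, cash and benefits alone come to roughly $300,000 before any equity is added. That is consistent with the fully loaded figures above.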

There is also the cost of time. Average time-to-fill for senior ML roles in California’s financial services and healthcare sectors has reached six to seven months. During that time, the ML project does not pause — it either stalls, is handed to engineers without the right skills, or forces other team members to absorb the gap. The opportunity cost of a seven-month hiring cycle for a business with time-sensitive ML initiatives is rarely factored into hiring budget conversations, but it should be.

This decision has a larger impact on your ML project timeline and budget than any architectural choice you will make. Both options are appropriate in the right circumstances. Understanding which is right for your situation requires honest assessment of three variables: timeline pressure, budget flexibility, and the long-term strategic importance of owning ML capability in-house.

Internal ML teams make sense when your ML capability is genuinely proprietary and represents a durable competitive advantage. If your model’s value comes directly from continuous access to your operational data — the way Netflix’s recommendation system relies on real-time viewing behaviour across 280 million users, or the way Airbnb’s pricing model relies on supply, demand, and conversion data that no external partner could access — then the investment in internal hiring is justified.

It also makes sense when you are building an ML platform rather than a single ML application: when the goal is to create internal infrastructure that will support dozens of models across multiple products over time, an in-house team becomes the only scalable approach. Companies like Uber, DoorDash, and Instacart built their ML platforms internally specifically because the volume and variety of ML use cases across their products exceeded what any external partnership model could efficiently support.

For these organisations, the six-month hiring cycle and the $300,000-plus annual cost are justified because the ML team’s output is directly tied to core product value. The cost of not having that team is the product itself falling behind.

For most California SMEs, Series A and B startups, and established businesses adding ML to specific products or workflows, the in-house calculation does not work. Filling one senior ML role can take seven months. Onboarding then takes a further three to six months before that engineer reaches full productivity. That puts you ten to thirteen months from your first production ML output, assuming the hire succeeds on the first attempt, which the data suggests it often does not.

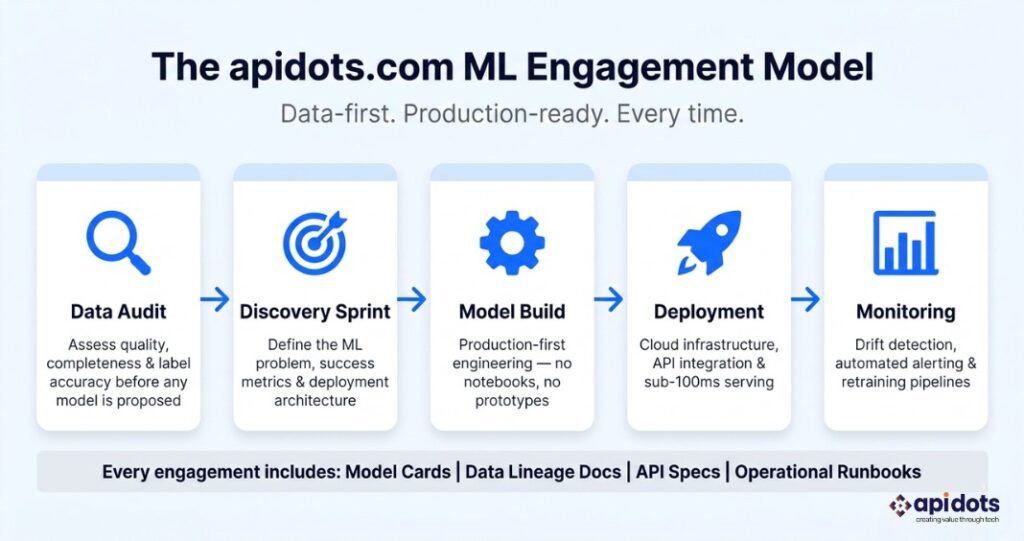

The engineers at apidots.com work with California businesses on a model that addresses this directly: a fixed-scope discovery sprint to understand your data and define the ML problem precisely, followed by a phased delivery process that puts working software in your hands within weeks rather than months. You access a full ML team from day one — data engineers, ML engineers, MLOps specialists, and a technical lead — without the hiring cycle, the benefits overhead, the equity dilution, or the productivity ramp.

The economics are particularly clear for defined-scope ML projects. If you need a fraud detection model, a demand forecasting system, a document classification pipeline, or a recommendation engine, the API Dots delivery model typically results in a production system in 8 to 16 weeks, at a cost that compares favourably to a single year’s fully-loaded salary for one senior ML engineer.

Weighing in-house hiring against a specialist ML partner? The team at apidots.com will give you an honest answer. Book a Free Strategy Call →

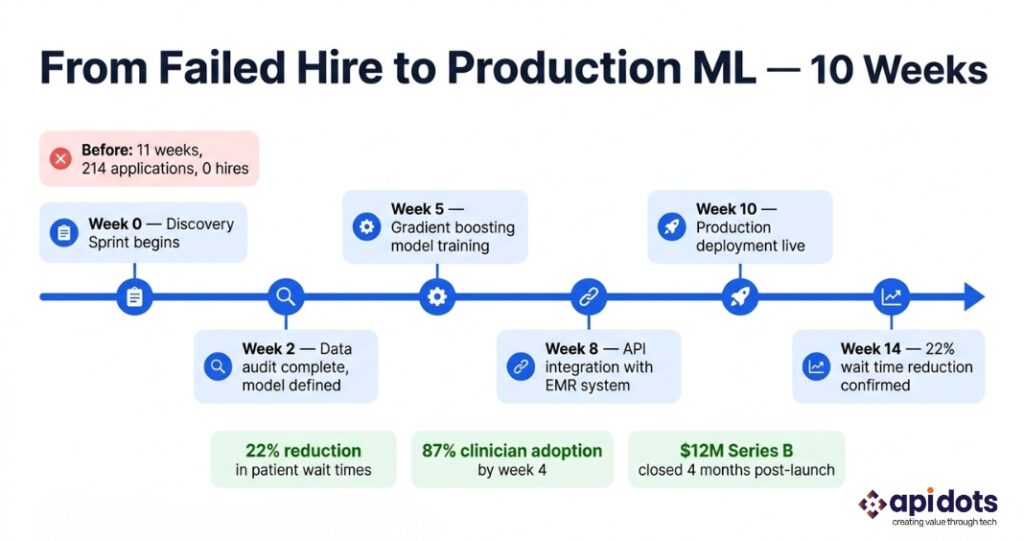

Background: A San Jose-based HealthTech startup developing an AI-assisted patient triage platform for urgent care clinics across California and Nevada. Series A funded at $8.4 million, 28 employees, HIPAA-compliant infrastructure already in place. Their core ML requirement was a risk-scoring model that could prioritise patients in waiting queues based on presenting symptoms, vital signs, and historical visit patterns — reducing the reliance on manual nurse triage for initial queue ordering.

The hiring attempt that failed: Before approaching the team at apidots.com, the company spent 11 weeks attempting to hire a senior ML engineer in San Francisco. They posted the role at $195,000 base salary — competitive, but below what top candidates were receiving from the larger companies also actively hiring in that market. They received 214 applications, conducted 23 phone screens to filter for production ML experience, ran 8 structured technical interviews, and made 2 conditional offers. Both candidates declined in favour of competing offers from companies with stronger brand names and higher total compensation packages. The hiring effort had consumed approximately 340 hours of internal management time and produced no output.

The discovery process: The API Dots discovery sprint ran for two weeks and covered four areas: data structure assessment (evaluating 14 months of historical triage records for completeness, consistency, and labelling quality), problem scoping (defining the model’s input features, output format, and the specific decision the model needed to support), success metrics (agreeing on the business outcome the model needed to achieve, not just the statistical metric it would be evaluated against), and deployment architecture (designing the API contract between the model and the clinic’s existing EMR system from week one, rather than treating it as a post-training problem).

The build: The model architecture chosen was a gradient boosting classifier trained on the cleaned historical triage data. The choice was deliberate. Gradient boosting models are far easier to interpret than deep neural networks, because feature importances and per-prediction explanations can be produced for them. That matters in a clinical setting, where clinicians need to understand and trust the model’s reasoning. A more complex neural network approach was considered and rejected because the interpretability trade-off was not acceptable to the clinical staff who would be using the output.

The model was containerised using Docker and deployed via Google Cloud Platform, integrated with the clinic’s EMR through a REST API with sub-100ms response time. A monitoring pipeline tracked prediction confidence distributions weekly, with automated Slack alerts triggered if the distribution shifted beyond a defined threshold. Model performance was reviewed monthly, with a retraining trigger set based on both performance degradation and new training data volume. The cloud infrastructure was designed to scale horizontally as the client added clinic locations.
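The case study does not say which statistic drove the confidence-distribution alert. One common choice is the Population Stability Index (PSI), sketched below in Python. This is an assumption, not the deployed implementation. The sketch compares one week of prediction confidences against a baseline window. The 10 equal-width bins and the 0.2 threshold are illustrative; 0.1 and 0.25 are frequently quoted rules of thumb. Sending the Slack alert itself is left out.

```python
import math
from typing import Sequence


def _bin_shares(scores: Sequence[float], bins: int, eps: float) -> list[float]:
    """Fraction of scores in each equal-width bin over [0, 1], floored at eps to avoid log(0)."""
    counts = [0] * bins
    for s in scores:
        if not 0.0 <= s <= 1.0:
            raise ValueError(f"confidence score out of range: {s}")
        counts[min(int(s * bins), bins - 1)] += 1  # a score of exactly 1.0 goes in the top bin
    return [max(c / len(scores), eps) for c in counts]


def population_stability_index(baseline: Sequence[float], current: Sequence[float],
                               bins: int = 10, eps: float = 1e-6) -> float:
    if not baseline or not current:
        raise ValueError("both windows need at least one score")
    expected = _bin_shares(baseline, bins, eps)
    actual = _bin_shares(current, bins, eps)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def confidence_shift_alert(baseline: Sequence[float], current: Sequence[float],
                           threshold: float = 0.2) -> bool:
    """True when this week's confidence distribution has moved past the (hypothetical) threshold."""
    return population_stability_index(baseline, current) > threshold


baseline_week = [i / 100 for i in range(100)]
collapsed_week = [0.95] * 100  # nearly every prediction suddenly lands in one confidence bin
print(confidence_shift_alert(baseline_week, baseline_week),
      confidence_shift_alert(baseline_week, collapsed_week))
```

Identical windows score 0.0. A week in which confidences collapse into a single bin scores far above any usual threshold and would raise the alert.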

The outcome: Production deployment went live at week 10 of the engagement. In pilot clinics, average patient wait time for initial triage assessment decreased by 22% in the first 60 days. Clinician adoption rate reached 87% by week four — significantly above the 60% threshold the team had set as their initial success target. The model identified three patient profile clusters that the previous manual triage process had been systematically under-prioritising for urgent assessment. The startup included quantified results from the first 90 days of production deployment in their Series B pitch deck, which closed at $12 million four months after go-live.

The failure was not the absence of ML capability in California. It was the assumption that hiring one engineer was the right unit of investment for this type of project. What was needed was a team with specialised roles and a delivery process — and that is what the partnership with apidots.com provided.

Also Read: Predictive AI and ML Development for Finance and Manufacturing

This framework is used by the California ML team at apidots.com when evaluating engineers for our own development projects. Every step is designed to surface production experience rather than theoretical knowledge, because theoretical knowledge is what the vast majority of California ML resumes contain.

Before any algorithm question or coding exercise, ask the candidate to describe a specific ML system they have shipped to production. Not a project they worked on — a system they personally deployed, that served real predictions to real users. The questions that follow should be specific: What was the model serving latency at p99? How did you monitor for model drift? What happened the first time the model behaved unexpectedly in production, and what did you do?

Candidates with genuine production experience answer these questions with specific detail, including the uncomfortable parts. Candidates without it answer with generalities or pivot to describing what they would do rather than what they did.

Give the candidate a realistic sample dataset containing missing values, format inconsistencies, duplicate records, and outliers that are not obviously erroneous. Give them 20 minutes and ask them to document their approach to assessing and addressing the data quality issues before any modelling begins.

A production ML engineer’s first instinct is to understand the data generating process — why are these fields missing, what does this outlier pattern mean, is this duplicate a data entry error or a legitimate repeat? A notebook engineer’s first instinct is to write code to drop nulls and start training. The difference in approach is immediately visible and reliably predictive of production performance.
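The diagnose-first instinct can be expressed in code. This Python sketch is an illustration with hypothetical field names. It reports missing values, exact duplicate records, and outliers under Tukey's 1.5 × IQR rule, a common heuristic swapped in here for illustration. It deliberately drops nothing. Format inconsistencies are not covered.

```python
from collections import Counter
from statistics import quantiles
from typing import Any


def audit(rows: list[dict[str, Any]], numeric_fields: list[str]) -> dict[str, Any]:
    """Describe data quality problems; modify nothing."""
    fields = sorted({key for row in rows for key in row})
    # Treat absent keys, None, and empty strings as missing.
    missing = {f: sum(1 for row in rows if row.get(f) in (None, "")) for f in fields}

    # Exact duplicates only: whether a repeat is an entry error or a genuine
    # repeat visit is a question for whoever understands the data.
    seen = Counter(tuple(sorted(row.items())) for row in rows)
    duplicates = sum(count - 1 for count in seen.values() if count > 1)

    outliers: dict[str, list[float]] = {}
    for f in numeric_fields:
        values = [float(row[f]) for row in rows if row.get(f) not in (None, "")]
        if len(values) < 4:  # too few points for quartiles to mean much
            continue
        q1, _, q3 = quantiles(values, n=4)
        iqr = q3 - q1
        low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
        outliers[f] = [v for v in values if v < low or v > high]

    return {"rows": len(rows), "missing": missing,
            "exact_duplicates": duplicates, "iqr_outliers": outliers}


# Hypothetical triage-style sample: one blank age, one repeated row, one high but plausible heart rate.
sample = [
    {"age": "34", "heart_rate": "80"},
    {"age": "", "heart_rate": "82"},
    {"age": "34", "heart_rate": "80"},
    {"age": "40", "heart_rate": "79"},
    {"age": "41", "heart_rate": "81"},
    {"age": "39", "heart_rate": "180"},
]
print(audit(sample, numeric_fields=["age", "heart_rate"]))
```

The flagged 180 bpm reading is the interesting case. It is statistically extreme but clinically possible, so the right next step is a question about how the data was recorded, not a `dropna`-style deletion.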

Ask the candidate to describe the failure modes of the best ML model they have built. Not the strengths — the specific, concrete ways in which the model fails, and under what input conditions. Production ML engineers have detailed, specific answers to this question because they have lived through those failures. The ability to describe and reason about failure modes is one of the clearest markers of production experience in California ML hiring.
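One concrete way to find failure modes is slice-based error analysis. You break evaluation results down by an input attribute and rank the segments by error rate. Here is a minimal Python sketch. The `label`, `prediction` and `device` field names are hypothetical.

```python
from collections import defaultdict
from typing import Any


def error_rate_by_segment(records: list[dict[str, Any]],
                          segment_key: str) -> list[tuple[Any, float, int]]:
    """Return (segment, error_rate, count) tuples, worst segment first."""
    totals: dict[Any, int] = defaultdict(int)
    errors: dict[Any, int] = defaultdict(int)
    for r in records:
        seg = r[segment_key]
        totals[seg] += 1
        if r["prediction"] != r["label"]:
            errors[seg] += 1
    rows = [(seg, errors[seg] / totals[seg], totals[seg]) for seg in totals]
    return sorted(rows, key=lambda row: row[1], reverse=True)


records = [
    {"device": "web", "label": 1, "prediction": 1},
    {"device": "web", "label": 0, "prediction": 0},
    {"device": "web", "label": 0, "prediction": 0},
    {"device": "web", "label": 1, "prediction": 1},
    {"device": "mobile", "label": 1, "prediction": 0},
    {"device": "mobile", "label": 0, "prediction": 0},
]
print(error_rate_by_segment(records, "device"))
```

The count is returned alongside the rate on purpose. A segment with a high error rate and only a handful of examples is a lead to investigate, not yet a confirmed failure mode.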

Present a scenario: your client’s churn prediction model was trained six months ago on customer behavioural data. The underlying customer behaviour has shifted significantly due to a product change, and the model’s performance has degraded. Describe the MLOps architecture you would design to detect this problem automatically and respond to it systematically.

The answer should include:

- monitoring for input data distribution drift (not just model output metrics)
- a retraining pipeline triggered by either performance thresholds or data volume accumulation
- model versioning, with shadow deployment and A/B testing of candidate models
- a rollback mechanism

Candidates who answer with ‘retrain the model when someone notices it is wrong’ have not operated ML systems at production scale.
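The trigger logic in that answer can be sketched in a few lines of Python. Everything here is hypothetical: AUC as the metric, the thresholds, and the field names are placeholders a team would set from its own data. The drift score would come from whatever input-drift statistic the monitoring pipeline computes. The second function covers the shadow-deployment step: a retrained challenger only takes traffic if it beats the current model on the same shadow traffic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonitoringSnapshot:
    input_drift: float       # drift score for input features vs. the training window
    live_auc: float          # performance on outcomes that have been labelled so far
    deployed_auc: float      # performance measured when the current model shipped
    new_labelled_rows: int   # labelled examples accumulated since the last training run


@dataclass(frozen=True)
class RetrainPolicy:
    max_input_drift: float = 0.2   # hypothetical thresholds: tune per model
    max_auc_drop: float = 0.03
    min_new_rows: int = 50_000


def retrain_reasons(s: MonitoringSnapshot, p: RetrainPolicy) -> list[str]:
    """Empty list means no retraining is needed; otherwise, the reasons it is."""
    reasons = []
    if s.input_drift > p.max_input_drift:
        reasons.append("input_drift")
    if s.deployed_auc - s.live_auc > p.max_auc_drop:
        reasons.append("performance_degradation")
    if s.new_labelled_rows >= p.min_new_rows:
        reasons.append("data_volume")
    return reasons


def choose_serving_model(champion_auc: float, challenger_shadow_auc: float,
                         min_gain: float = 0.0) -> str:
    """Promote the retrained model only if it beats the live one on shadow traffic;
    otherwise keep the current version, which also serves as the rollback target."""
    return "challenger" if challenger_shadow_auc - champion_auc > min_gain else "champion"
```

For churn, outcome labels arrive weeks after the prediction. That is why the input-drift check matters: it can fire before enough labelled outcomes exist to measure a performance drop.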

Give the candidate a model performance metric — for example, ‘the precision of the fraud detection model improved from 0.78 to 0.84.’ Ask them to explain what that means for the business in plain language, and to identify what additional information they would need to quantify the business impact.

Strong candidates will point out two things. First, higher precision means a smaller share of flagged transactions are false positives: fewer legitimate transactions declined, fewer customers incorrectly flagged. Second, the absolute reduction depends on how many transactions the model flags. They will then ask about the volume of transactions processed, the cost per false positive in customer experience and support, and whether the recall trade-off is acceptable. Candidates who cannot connect model metrics to business outcomes have been working in research environments, not product environments.
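The arithmetic a strong candidate would walk through looks like this Python sketch. It assumes the number of flagged transactions stays the same before and after the change. The 10,000 flagged transactions and the $25 cost per false positive are hypothetical inputs, not figures from this guide.

```python
def false_positives(flagged: int, precision: float) -> float:
    """Legitimate transactions among those the model flags."""
    if not 0.0 <= precision <= 1.0:
        raise ValueError("precision must be in [0, 1]")
    return flagged * (1.0 - precision)


def monthly_fp_saving(flagged: int, old_precision: float, new_precision: float,
                      cost_per_fp: float) -> float:
    """Cost avoided, assuming the number of flagged transactions does not change."""
    avoided = false_positives(flagged, old_precision) - false_positives(flagged, new_precision)
    return avoided * cost_per_fp


print(round(false_positives(10_000, 0.78)), round(false_positives(10_000, 0.84)),
      round(monthly_fp_saving(10_000, 0.78, 0.84, cost_per_fp=25.0)))
```

With those inputs, false positives fall from about 2,200 to about 1,600 a month, roughly $15,000 of avoided cost. None of this says anything about the fraud the model misses, which is why the recall question still has to be asked.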

Also Read: AI Powered Software Solutions for Growing Businesses

These patterns are consistently associated with ML hires that underperform expectations in California companies. Recognising them in a hiring process is far less expensive than discovering them six months into a project.

The California ML team at apidots.com works exclusively on production ML and AI systems. We do not build demos, produce proofs of concept that never ship, or deliver model files without deployment infrastructure. Every engagement is scoped around a defined business outcome, and every system we build includes monitoring, alerting, and a documented retraining strategy as standard.

The thing that California clients most frequently tell us differentiates working with API Dots from working with other ML vendors is the discovery process. We have turned down projects where the data quality made the proposed ML approach unworkable. We have recommended simpler approaches — including statistical methods and rule-based systems — when the data did not justify the complexity and cost of a machine learning model. That honesty is not common in the California ML vendor market, and California businesses who have been oversold on ML capability before tend to notice it immediately.

Explore the full scope of ML development services at apidots.com, or read the complete guide to Machine Learning Software Development to understand how the California ML team approaches projects from data strategy through deployment and post-launch optimisation.

Looking for a production ML development partner in California? Talk to the team at apidots.com today. Book a Free Consultation →

What is the average time to hire a senior ML engineer in California?

For senior ML engineering roles, California companies typically spend 45 to 90 days from job posting to offer acceptance under favourable conditions. In the financial services and healthcare sectors, where role requirements are more specialised, average time-to-fill has reached six to seven months. The California market is candidate-driven: qualified engineers receive multiple competing offers within days of becoming available, which means a slow or poorly structured hiring process almost always ends in a declined offer. Partnering with a specialist firm like the team at apidots.com bypasses this timeline entirely for defined project work.

How do I know whether a California ML engineer has genuine production experience?

Ask for a specific production system they have deployed and maintained — not a project they contributed to, but a system they own. Then ask about the monitoring setup, the last time the model’s performance degraded, and what they did. Production engineers answer with specific, sometimes uncomfortable detail. Candidates without production experience answer with generalities or pivot to hypotheticals. The data quality assessment exercise described in the vetting framework section of this guide is also highly reliable — production engineers approach messy data with diagnosis questions, not with code.

What ML specialisations are commanding the highest salaries in California in 2026?

Specialist ML salary data from Q1 2026 shows three areas commanding the largest premiums above the California baseline of $210,770: LLM fine-tuning and RAG architecture (40 to 60% above baseline for senior engineers), MLOps infrastructure including Kubernetes, MLflow, and Vertex AI (25 to 40% above baseline), and domain-specific ML in healthcare imaging, autonomous systems, and financial risk modelling (sector premium of 20 to 35%). FAANG-level total compensation packages for principal engineers in these specialisations regularly reach $400,000 to $550,000. Source: Signify Technology ML Salary Benchmarks 2025-2026.

When does it make more sense to partner with an ML development firm than to hire in-house?

Partnering with a specialist ML firm is the right answer when your timeline is under six months, when your ML project scope is defined enough to specify inputs and expected outputs, when you do not need permanent in-house ML capability after the project ships, or when your budget cannot support the fully-loaded $300,000-plus annual cost of a senior San Francisco ML hire. For most California SMEs and growth-stage companies evaluating their first or second ML system, the specialist firm model delivers a production system in the time it takes to complete a hiring process — with a full team rather than a single engineer.

Can apidots.com work with California businesses that already have a partial ML implementation?

Yes. A significant portion of the work the team at apidots.com takes on in California involves existing ML implementations — models that were trained but never deployed to production, pilot systems that failed to scale, or live models that have degraded in accuracy over time without anyone noticing. We begin every such engagement with a technical audit and an honest assessment of what can be preserved and what needs to be rebuilt. We do not recommend rebuilding systems that do not need rebuilding, and we do not pretend that systems that do need rebuilding can be patched.


I’m a digital marketer with experience in SEO, content strategy, and online brand growth. I specialize in creating optimized content, improving website rankings, building high-quality backlinks, and driving traffic through effective digital marketing strategies. I enjoy helping businesses strengthen their online presence and turn visitors into customers.