Machine Learning Software Development: How to Build, Deploy, and Scale ML Systems

Most companies that invest in machine learning do not fail at the algorithm. They fail at the engineering around it. The model works in a notebook. It performs well in testing. Then it hits production and breaks, degrades silently, or costs three times the budget.

Machine learning software development is the practice of building complete systems that learn from data, make predictions, and improve over time in real production environments. The challenge for any business is not training a model. It is building the surrounding infrastructure: data pipelines, deployment architecture, scaling strategy, and ongoing monitoring that keep that model working reliably after it ships. This is where most projects fail.

As Andrej Karpathy, co-founder of OpenAI, put it at the YC AI Startup School in June 2025:

“Software 1.0 is code humans write. Software 2.0 is neural network weights. The new engineering is not debugging logic. It’s curating datasets, building pipelines, and keeping models honest in production. The hottest new programming language is English.”

According to Gartner, 85% of ML projects fail to reach production not because of bad models, but because of missing systems engineering around them.

This guide covers the full ML lifecycle: system architecture, deployment patterns, MLOps infrastructure, cost structure, and the implementation framework used by high-performing engineering teams. If you are evaluating machine learning for your business, this is the practical guide to moving from idea to a reliable, production-ready ML system.

If you are looking beyond machine learning, you can also explore our complete guide to AI software development for a full overview of AI systems, tools, and implementation strategies.

Machine learning systems behave differently from traditional software because they rely on data instead of fixed rules.

In traditional software, engineers define exact logic using conditions and rules. In machine learning, the system learns patterns from data, which makes behavior probabilistic rather than deterministic. This creates new challenges in how systems are tested, deployed, and maintained.

The biggest difference appears in production.

A model that performs well during development can fail once deployed. It may degrade over time, produce inconsistent results, or become too costly to operate. These failures are often caused by data drift, mismatches between training and production environments, and a lack of monitoring.

As machine learning becomes a standard part of enterprise systems, the challenge is no longer building models. It is building systems that remain reliable, scalable, and accurate over time.

Successful machine learning software development requires strong engineering across data pipelines, deployment architecture, scaling strategy, and ongoing monitoring.

Without these components, even high-performing models struggle to deliver consistent business value in production.

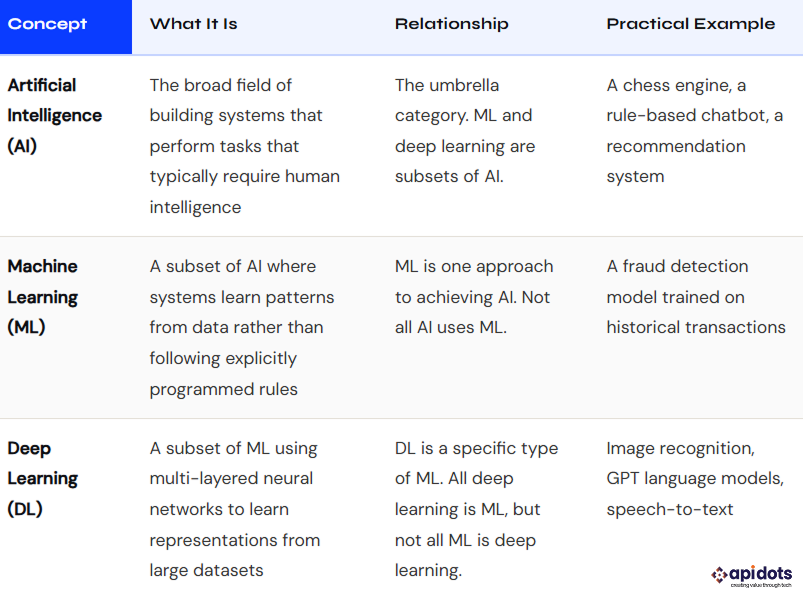

Why it matters for software development: Most business ML problems, such as fraud scoring, churn prediction, demand forecasting, and document classification, are solved with classical ML (XGBoost, logistic regression, and random forests), not deep learning. Deep learning requires significantly more data, compute, and engineering complexity. Choose the simplest approach that solves the problem. Save deep learning for problems that actually need it: images, audio, video, and large-scale NLP.

Machine learning is not a replacement for traditional software. It is an extension that enables systems to make data-driven decisions where rules are too complex or constantly changing.

In modern applications, machine learning is typically used for prediction, classification, ranking, and automation tasks that cannot be solved efficiently with rule-based logic.

In most production systems, machine learning operates alongside traditional software. The application handles business logic, APIs, and user experience, while the ML system provides predictions or decisions that enhance those workflows.

| Dimension | Traditional Software | ML-Powered Software |
| --- | --- | --- |
| Core Logic | Explicitly written by engineers using rules and conditions | Learned automatically from training data |
| Testing | Unit tests with deterministic outputs | Statistical evaluation using accuracy, precision, recall, and AUC |
| Versioning | Code versioning (e.g., Git) | Versioning of code, data, and models together |
| Deployment | Ship new code to replace old code | Deploy new models trained on updated data, with different failure modes |
| Degradation | Fails visibly when code breaks | Degrades silently due to data drift or changing real-world patterns |
| Debugging | Stack traces and logs | Model explainability tools and data distribution analysis |
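The testing row is easier to see in code. The sketch below is a minimal illustration, not a prescribed test harness: instead of asserting one exact output, it computes precision and recall on a labelled sample and checks them against thresholds. The function names, sample labels, and threshold values are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class EvalReport:
    precision: float
    recall: float


def evaluate(y_true: list[int], y_pred: list[int]) -> EvalReport:
    """Compute precision and recall for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return EvalReport(precision, recall)


def passes_gate(report: EvalReport, min_precision: float, min_recall: float) -> bool:
    """A statistical 'test': the model passes if both metrics clear their thresholds."""
    return report.precision >= min_precision and report.recall >= min_recall


# Illustrative labels only
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 1, 0, 0, 1, 1, 0]
report = evaluate(y_true, y_pred)
print(report)  # EvalReport(precision=0.75, recall=0.75)
print(passes_gate(report, min_precision=0.7, min_recall=0.7))  # True
```

The thresholds would normally come from the business requirement (for example, how many false fraud alerts are tolerable), not from the model.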

Machine learning creates the most impact when applied to high-volume decisions, pattern recognition, and automation tasks. These are areas where rule-based systems break down, and data-driven models perform better.

Here are the most common and high-impact machine learning use cases in business:

Machine learning analyzes user behavior to deliver personalized content, products, and experiences in real time.

Used by platforms like Netflix, Spotify, and Amazon, recommendation systems drive user engagement and revenue growth.

Business impact:

ML models evaluate transactions in real time using historical patterns and behavioral signals. These systems can process millions of decisions per second with high accuracy.

They are widely used in fintech and banking environments, especially for real-time risk analysis and anomaly detection. For a deeper look, see AI/ML development in finance and manufacturing.

Business impact:

Machine learning predicts future outcomes such as customer churn, product demand, and equipment failure using historical data.

This is commonly applied in finance, retail, and manufacturing environments. It also plays a key role in inventory optimization. For example, the AI inventory management guide shows how companies use ML to forecast demand and reduce stock inefficiencies.

Business impact:

NLP models extract meaning from unstructured text, enabling features such as document classification, sentiment analysis, contract review, and intelligent search.

These capabilities are widely used across SaaS platforms and enterprise workflows, especially as companies scale automation through AI integration in SaaS.

Business impact:

Computer vision systems analyze images and video for tasks such as quality inspection, defect detection, and visual search.

Common in manufacturing, healthcare, and e-commerce.

Business impact:

While these use cases highlight where machine learning delivers value, implementing them successfully requires a structured engineering approach.

To understand how these systems are built and deployed in practice, it is important to break down the full machine learning development lifecycle.

Machine learning software development follows a structured lifecycle, but unlike traditional software, most complexity lies after model training. The biggest risks and failures typically occur during deployment, monitoring, and maintenance.

A production-ready ML system requires six interconnected phases, each with its own engineering challenges.

Data is the foundation of every machine learning system. Model performance is directly limited by the quality, consistency, and governance of the data used for training.

Key considerations:

Data quality is closely tied to governance and compliance. Issues such as bias, leakage, and poor data handling can significantly impact model reliability. These challenges are often linked to broader AI data privacy risks that organizations must address when building production systems.

Poor data quality remains the most common reason machine learning models fail in production.
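A basic data validation check can catch many of these problems before training starts. The following is a minimal sketch under stated assumptions: the schema, column names, and rules are hypothetical, and a real pipeline would usually rely on a dedicated validation library rather than hand-written checks.

```python
from typing import Any

# Hypothetical schema: column name -> (expected type, nullable)
SCHEMA = {
    "customer_id": (str, False),
    "monthly_spend": (float, False),
    "plan": (str, True),
}


def validate_rows(rows: list[dict[str, Any]], schema: dict = SCHEMA) -> list[str]:
    """Return a list of readable problems; an empty list means the batch passes."""
    problems = []
    for column, (expected_type, nullable) in schema.items():
        values = [row.get(column) for row in rows]
        nulls = sum(v is None for v in values)
        if nulls and not nullable:
            problems.append(f"{column}: {nulls} null value(s) in a required column")
        wrong_type = sum(
            v is not None and not isinstance(v, expected_type) for v in values
        )
        if wrong_type:
            problems.append(f"{column}: {wrong_type} value(s) with unexpected type")
    return problems


good_batch = [
    {"customer_id": "a1", "monthly_spend": 42.0, "plan": "pro"},
    {"customer_id": "a2", "monthly_spend": 10.5, "plan": None},
]
bad_batch = [
    {"customer_id": "a1", "monthly_spend": "42", "plan": "pro"},  # spend is a string
    {"customer_id": None, "monthly_spend": 3.0, "plan": None},    # missing ID
]
print(validate_rows(good_batch))  # []
for problem in validate_rows(bad_batch):
    print(problem)
```

Running a check like this on every incoming batch, and failing the pipeline when it returns problems, turns data quality from a review task into an enforced gate.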

Feature engineering transforms raw data into structured inputs that models can learn from. In most real-world systems, this step has a greater impact on performance than model selection.

Best practices:

Well-designed features improve both model accuracy and system reliability.

Model selection should be guided by business constraints such as latency, interpretability, and infrastructure cost, not just accuracy.

Key practices:

Model development also involves continuous experimentation and iteration. In practice, teams often face challenges in debugging pipelines, training workflows, and model outputs. These issues are similar to those encountered in debugging AI-generated code, where small inconsistencies can lead to unreliable results.

In many business scenarios, simpler models are easier to maintain, deploy, and scale, making them more effective for long-term use.
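Selecting a model under business constraints can be expressed as a simple filter-then-rank step. This is a sketch only: the candidate names, metric values, latency figures, and budgets below are invented for illustration and are not benchmarks.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    name: str
    auc: float               # offline quality metric
    p95_latency_ms: float    # measured serving latency
    monthly_cost_usd: float  # estimated infrastructure cost


def select_model(
    candidates: list[Candidate], max_latency_ms: float, max_cost_usd: float
) -> Optional[Candidate]:
    """Pick the highest-AUC candidate that fits the latency and cost budget."""
    eligible = [
        c for c in candidates
        if c.p95_latency_ms <= max_latency_ms and c.monthly_cost_usd <= max_cost_usd
    ]
    return max(eligible, key=lambda c: c.auc) if eligible else None


# Hypothetical candidates with illustrative numbers
candidates = [
    Candidate("logistic_regression", auc=0.84, p95_latency_ms=2.0, monthly_cost_usd=300.0),
    Candidate("gradient_boosting", auc=0.89, p95_latency_ms=8.0, monthly_cost_usd=600.0),
    Candidate("deep_network", auc=0.91, p95_latency_ms=45.0, monthly_cost_usd=4000.0),
]
choice = select_model(candidates, max_latency_ms=20.0, max_cost_usd=1000.0)
print(choice.name)  # gradient_boosting
```

In this example the most accurate candidate is rejected because it exceeds both budgets, which is the trade-off the paragraph above describes.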

Evaluating a model in production requires more than accuracy metrics. The focus should be on business impact and real-world performance.

Important factors:

A model that performs well offline may still fail under real production conditions.

Deployment is where most machine learning projects fail. Moving from a working model to a reliable production system requires strong engineering practices.

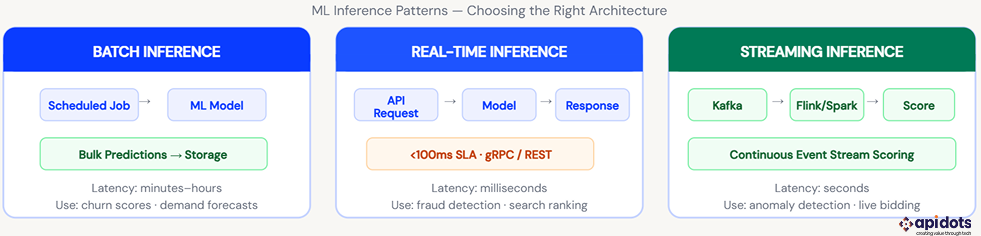

Common deployment patterns:

Best practices:

A successful deployment ensures the model delivers value under real-world conditions.
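One widely used way to reduce deployment risk is a canary rollout, where a small, stable fraction of traffic is sent to the new model before it replaces the old one. The sketch below shows only the routing decision; the function name, the `request_id` key, and the 10% fraction are assumptions for illustration.

```python
import hashlib


def route(request_id: str, canary_fraction: float) -> str:
    """Deterministically send a stable fraction of requests to the canary model.

    Hashing the ID (rather than drawing a random number) means the same user or
    request always lands on the same model, which keeps comparisons clean.
    """
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1)
    return "canary" if bucket < canary_fraction else "stable"


# Roughly 10% of hypothetical user IDs are routed to the canary
assignments = [route(f"user-{i}", canary_fraction=0.10) for i in range(10_000)]
canary_share = assignments.count("canary") / len(assignments)
print(f"canary share: {canary_share:.3f}")
```

If the canary's error rate or business metrics stay within tolerance, the fraction can be raised step by step; if not, setting it to zero is an immediate rollback.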

Machine learning systems degrade over time as data and real-world conditions change. Continuous monitoring is essential to maintain performance.

What to monitor:

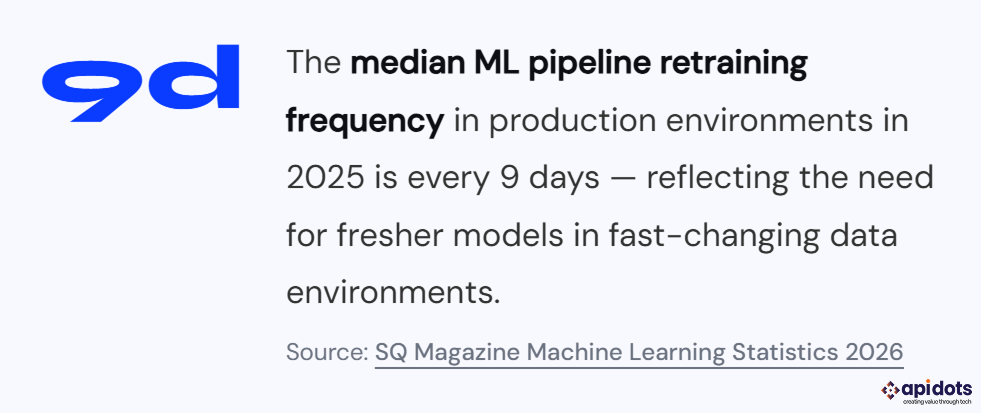

Retraining strategies:

Without monitoring, performance issues often go unnoticed until they impact business outcomes.
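Drift monitoring can start with a single statistic per feature. The sketch below uses the Population Stability Index (PSI), one common choice among several; it is an illustration, not a description of any specific monitoring product. The bin count and the 0.25 alert threshold are assumptions (0.25 is a commonly quoted rule of thumb, not a universal standard).

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a reference sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all reference values are equal

    def histogram(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # out-of-range values go to edge bins
        # A small floor avoids log(0) for empty bins
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = histogram(expected), histogram(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Illustrative data: training-time values vs. two production samples
reference = [i / 100 for i in range(100)]
same = list(reference)
shifted = [0.5 + i / 200 for i in range(100)]

print(psi(reference, same))            # 0.0
print(psi(reference, shifted) > 0.25)  # True
```

A scheduled job that computes this per feature and alerts above the threshold is often enough to trigger a retraining review before business metrics are affected.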

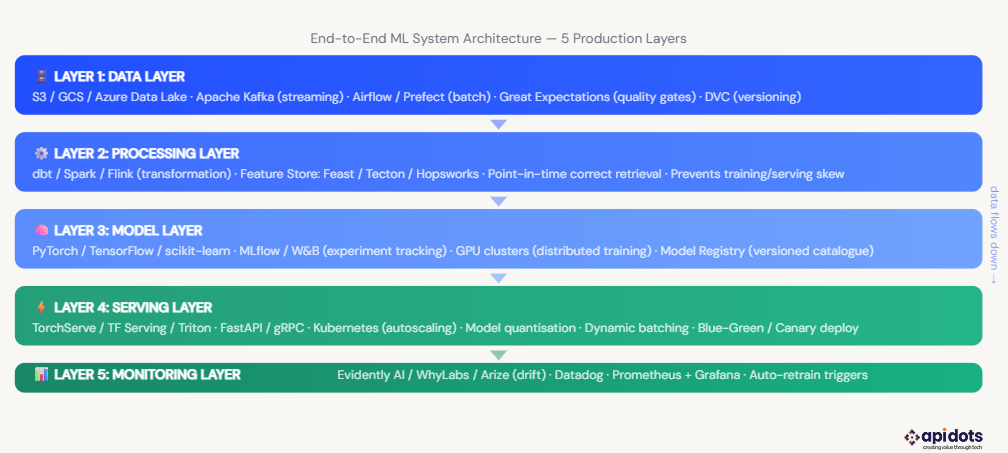

A production machine learning system is not just a model. It is a set of interconnected components that work together to deliver reliable predictions in real-world environments.

Most production ML systems follow a five-layer architecture:

Each layer plays a critical role. Failures in data quality, feature consistency, or monitoring can cause models to degrade even if they perform well during development.

While this architecture defines how ML systems are structured, running them reliably at scale requires automation and operational discipline.

This is where MLOps becomes essential.

MLOps is the practice of managing, automating, and scaling machine learning systems in production. It applies DevOps principles to machine learning, ensuring that models are not only built but also continuously deployed, monitored, and improved over time.

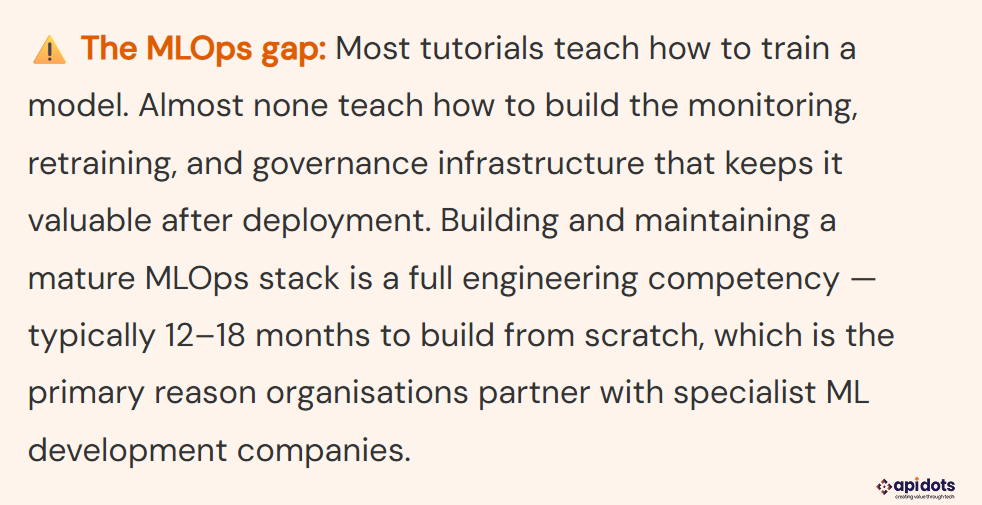

The MLOps market reached $1.7 billion in 2024 and is projected to reach $129 billion by 2034, with a 43% CAGR. That growth reflects how acutely organizations feel the absence of this discipline when they try to scale ML beyond experimental notebooks.

Without MLOps, machine learning workflows are manual and difficult to scale. Models are retrained inconsistently, deployments become risky, and performance issues often go undetected.

Most machine learning failures happen after deployment, not during model development.

MLOps addresses this by introducing automation and standardization across the entire lifecycle of machine learning systems.

Key benefits include the following:

A production-ready MLOps setup includes several key components:

These components ensure that machine learning systems remain stable, reproducible, and continuously improving.
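Reproducibility depends on versioning code, data, and model artifacts together. One way to sketch this is a release manifest whose identifier changes whenever any of those inputs change. The field names and the 12-character hash length are assumptions for illustration; dedicated tools typically handle this in practice.

```python
import hashlib
import json


def fingerprint(data: bytes) -> str:
    """Short content hash used to identify an artifact."""
    return hashlib.sha256(data).hexdigest()[:12]


def build_manifest(
    code_commit: str, training_data: bytes, model_bytes: bytes, params: dict
) -> dict:
    """Tie code, data, model, and parameters into one versioned record."""
    manifest = {
        "code_commit": code_commit,
        "data_hash": fingerprint(training_data),
        "model_hash": fingerprint(model_bytes),
        "params": params,
    }
    # The release ID changes if code, data, model, or parameters change
    canonical = json.dumps(manifest, sort_keys=True).encode("utf-8")
    manifest["release_id"] = fingerprint(canonical)
    return manifest


# Hypothetical inputs
v1 = build_manifest("abc123", b"rows-v1", b"weights", {"learning_rate": 0.1})
v2 = build_manifest("abc123", b"rows-v2", b"weights", {"learning_rate": 0.1})
print(v1["release_id"] != v2["release_id"])  # True: same code, different data
```

Storing this manifest next to each deployed model makes it possible to answer "which data and code produced this prediction?" long after the training run.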

Machine learning systems require validation of both code and data.

In a production ML pipeline:

This creates a continuous loop where models are regularly updated and evaluated without manual intervention.
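The final step of such a pipeline is usually an automated promotion decision. The following sketch assumes that a retrained candidate replaces the production model only if data checks passed and the chosen metric improved by a minimum margin. The metric name, margin, and function signature are hypothetical.

```python
def should_promote(
    candidate_metrics: dict,
    production_metrics: dict,
    data_checks_passed: bool,
    min_improvement: float = 0.0,
    metric: str = "auc",
) -> tuple[bool, str]:
    """Decide whether a retrained model may replace the production model."""
    if not data_checks_passed:
        return False, "data validation failed"
    gain = candidate_metrics[metric] - production_metrics[metric]
    if gain < min_improvement:
        return False, f"{metric} gain {gain:+.3f} is below the required {min_improvement:+.3f}"
    return True, f"{metric} improved by {gain:+.3f}"


# Illustrative metric values
print(should_promote({"auc": 0.90}, {"auc": 0.88}, True, min_improvement=0.01))
print(should_promote({"auc": 0.885}, {"auc": 0.88}, True, min_improvement=0.01))
print(should_promote({"auc": 0.95}, {"auc": 0.88}, False))
```

Returning a reason string alongside the decision keeps the pipeline auditable, since every skipped promotion leaves an explanation in the logs.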

While MLOps enables automation and reliability, building these systems also depends on choosing the right tools and platforms.

The next section covers the key machine learning tools and technologies used in modern development.

Choosing the right tools is essential for building scalable and production-ready machine learning systems. In practice, the most important decisions are not about algorithms, but about infrastructure, deployment, and system reliability.

These frameworks are used to build and train machine learning models.

| Framework | Category | Typical Use |
| --- | --- | --- |
| PyTorch | Deep learning | Neural networks, NLP, computer vision |
| TensorFlow | Enterprise ML | Production pipelines and large-scale systems |
| scikit-learn | Classical ML | Structured data and simpler models |
| XGBoost / LightGBM | Tabular data | Forecasting, fraud detection, scoring |

For most business applications, classical machine learning frameworks are often more efficient and easier to deploy than complex deep learning models.

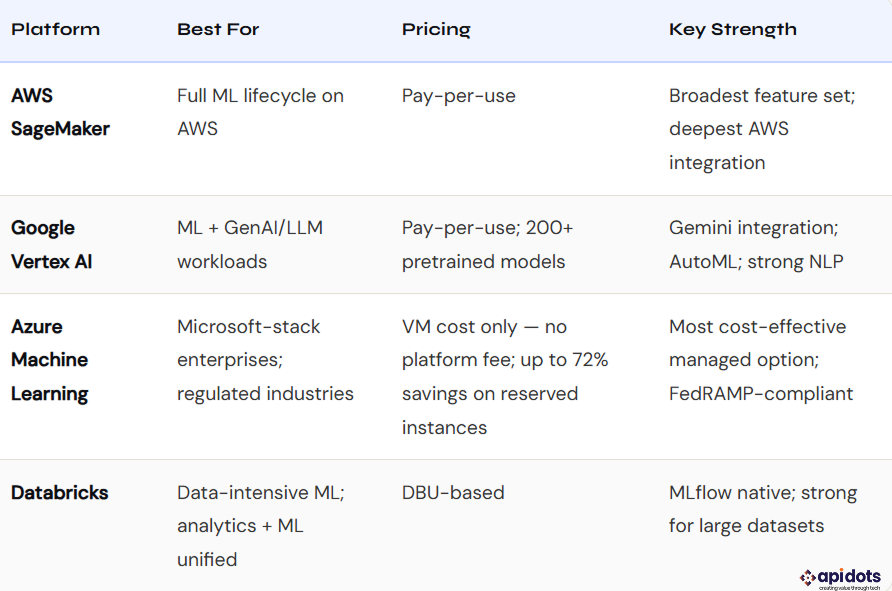

Cloud platforms provide managed infrastructure for training, deployment, and scaling machine learning systems.

These platforms reduce operational complexity and accelerate time to production.

Tools and platforms enable development, but the return on that investment depends on where machine learning is applied.

The next section breaks down machine learning use cases by industry.

ML delivers the most commercial value when embedded at the core of a product, not bolted on as a feature.

AI-native SaaS embeds ML as the primary value driver, not an add-on. Churn prediction identifies at-risk accounts before they downgrade. Usage-based anomaly detection flags security incidents in real time. Intelligent automation observes repetitive user actions and suggests workflows. NLP engines extract structured data from free-form inputs. SaaS products with embedded ML report 2–4× higher net revenue retention versus feature-equivalent non-ML products.

Read more: To get more insights about building AI-native SaaS architecture, refer to our complete guide to AI-first SaaS product development.

Financial services ML runs on three primary workloads: real-time fraud scoring at transaction authorization (millisecond latency, where gradient-boosted models such as XGBoost dominate), credit underwriting using thousands of non-traditional data signals beyond credit bureau data, and algorithmic trading systems making microsecond position decisions. Compliance in this sector requires model explainability: regulators demand interpretable credit decisions, which steers model selection toward ensemble methods with SHAP explanations rather than black-box deep learning.

ML drives every layer of the e-commerce experience. Search ranking surfaces the most relevant results per user intent. Recommendation engines (collaborative filtering, neural collaborative filtering) personalize every surface. Dynamic pricing adjusts in real time based on inventory, competitor signals, and demand. Inventory optimization forecasts demand by SKU, location, and season — reducing overstock costs by 20–30%.


Healthcare ML carries the highest compliance burden and the highest stakes. Clinical decision support surfaces diagnostic insights from imaging, labs, and clinical notes — requiring FDA clearance for most diagnostic applications. Administrative ML automates prior authorization, medical coding, and clinical documentation. Healthcare is the fastest-growing vertical in ML software development at 52.7% CAGR through 2033 — but it demands HIPAA compliance architecture from the first line of code.

The next section explains the common challenges in machine learning software development.

Machine learning projects often fail not because of poor models, but because of challenges in data, deployment, and long-term system management.

Understanding these challenges early helps organizations avoid costly mistakes and build production-ready ML systems.

Poor data quality is the most common reason machine learning models fail in production.

Incomplete, inconsistent, or biased data directly impacts model accuracy and reliability. Strong governance is required to ensure data integrity across the entire pipeline.

Key risks:

Without proper data validation and governance, even well-designed models fail in production.

A common production issue occurs when features are computed differently during training and inference.

This leads to inconsistent predictions and silent performance degradation.

Key risks:

This issue is often difficult to detect and can significantly impact system reliability.
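A common mitigation for training-serving skew is to define feature logic once and import it from both the training job and the serving path. The sketch below assumes a hypothetical churn use case; the field names (`signup_date`, `monthly_spend`, `plan`, `observed_on`) are invented for illustration.

```python
import math
from datetime import date


def build_features(raw: dict, as_of: date) -> dict:
    """Single source of truth for feature logic, shared by training and serving."""
    signup = date.fromisoformat(raw["signup_date"])
    return {
        "tenure_days": (as_of - signup).days,
        "log_monthly_spend": math.log1p(raw["monthly_spend"]),
        "is_annual_plan": 1 if raw["plan"] == "annual" else 0,
    }


def training_rows(history: list[dict]) -> list[dict]:
    # Training uses the date each record was observed, not today's date,
    # so features match what the model would have seen at prediction time.
    return [build_features(r, date.fromisoformat(r["observed_on"])) for r in history]


def serve_features(request: dict, today: date) -> dict:
    # Serving calls the same function, so the two paths cannot silently diverge.
    return build_features(request, today)


record = {
    "signup_date": "2024-01-01",
    "monthly_spend": 0.0,
    "plan": "annual",
    "observed_on": "2024-01-31",
}
offline = training_rows([record])[0]
online = serve_features(record, date(2024, 1, 31))
print(offline == online)  # True
```

A feature store generalizes the same idea: one definition, two read paths (offline for training, online for inference).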

Moving a model from experimentation to production requires engineering expertise beyond data science.

Many teams struggle with building APIs, scaling infrastructure, and integrating ML systems into existing applications.

Key risks:

These challenges are a major reason why many ML projects fail to reach production.

Machine learning models degrade over time as real-world data changes.

Without monitoring, this decline often goes unnoticed until it affects business outcomes.

Key risks:

Continuous monitoring and retraining are required to maintain performance.

Machine learning systems can become expensive due to compute, storage, and scaling requirements.

Costs increase rapidly with model complexity and production traffic, especially without proper planning and optimization.

Key risks:

Proper architecture and MLOps practices are essential to control costs.

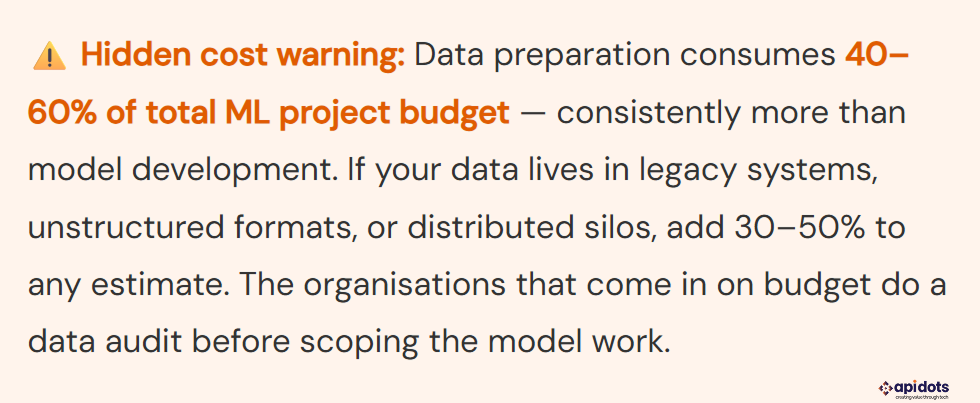

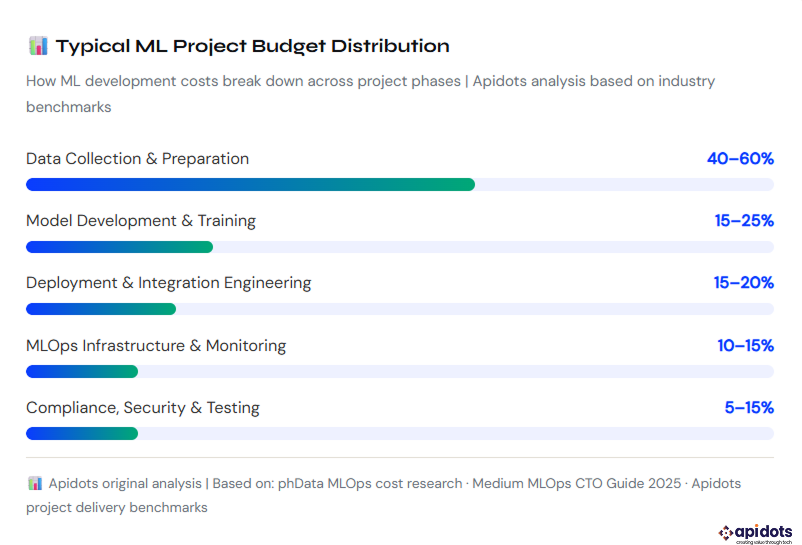

Team cost is the largest variable. Data preparation is the most underestimated. Maintenance cost is the one most proposals leave out entirely.

Cost is the question that determines whether an ML project gets approved. Here is a direct, honest breakdown of what machine learning software development actually costs in 2026.

| Role | Average US Salary (2026) | Primary Contribution |
| --- | --- | --- |
| ML Engineer | $160K–$248K | Pipelines, serving infrastructure, MLOps systems |
| Data Scientist | $129K–$159K | Feature engineering, model training, and evaluation |
| MLOps Engineer | $155K–$220K | CI/CD for ML, monitoring, retraining automation |
| Data Engineer | $130K–$185K | Data pipelines, feature stores, and data quality |
| Senior Bay Area ML Engineer | $225K+ base / $400K+ total comp | Production LLMOps, inference optimization |

| Cost Component | Typical Monthly Cost | Notes |
| --- | --- | --- |
| Cloud ML Training (GPU) | $500–$15,000/month | Varies by model size, training frequency, GPU type (A10G vs A100) |
| Model Serving Endpoint | $450–$2,500/month | Always-on instance; scales with traffic volume |
| Feature Store Infrastructure | $200–$1,500/month | Redis for online serving + managed DB for offline store |
| MLOps Platform (Managed) | $0–$5,000/month | Azure ML: VM cost only. SageMaker / Vertex: usage-based. MLflow: self-hosted, free |
| Data Storage & Pipelines | $100–$3,000/month | S3/GCS data lake + Airflow + data transfer |
| Monitoring Tools | $200–$2,000/month | Datadog, Evidently AI, or WhyLabs for drift detection and alerting |
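Adding up the infrastructure ranges above gives a rough envelope for monthly and annual spend. The snippet is plain arithmetic on the table's own figures; it assumes every component is used, which will not hold for every system.

```python
# (low, high) monthly USD ranges copied from the table above
MONTHLY_COSTS = {
    "Cloud ML Training (GPU)": (500, 15_000),
    "Model Serving Endpoint": (450, 2_500),
    "Feature Store Infrastructure": (200, 1_500),
    "MLOps Platform (Managed)": (0, 5_000),
    "Data Storage & Pipelines": (100, 3_000),
    "Monitoring Tools": (200, 2_000),
}

low = sum(lo for lo, _ in MONTHLY_COSTS.values())
high = sum(hi for _, hi in MONTHLY_COSTS.values())

print(f"Monthly: ${low:,} to ${high:,}")            # Monthly: $1,450 to $29,000
print(f"Annual:  ${low * 12:,} to ${high * 12:,}")  # Annual:  $17,400 to $348,000
```

The 20x spread between the low and high totals is the practical point: infrastructure cost is driven far more by model size and traffic than by the choice of tools.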

A production ML system is not a one-time build cost. Ongoing maintenance includes: scheduled or trigger-based model retraining (compute + engineer time), data pipeline maintenance as upstream schemas change, model performance reviews against new ground-truth labels, and infrastructure updates as cloud provider APIs evolve. Industry benchmarks suggest ongoing ML maintenance runs 15–25% of the initial build cost annually, and higher for models in high-velocity data environments where retraining is frequent. Budget for it explicitly, or it becomes an invisible tax on engineering bandwidth.
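To make the 15–25% figure concrete, the sketch below applies it to a hypothetical $200,000 build and projects a three-year total. The build cost and the time horizon are assumptions chosen for illustration; integer percentages are used to keep the arithmetic exact.

```python
def annual_maintenance(
    build_cost_usd: int, low_pct: int = 15, high_pct: int = 25
) -> tuple[int, int]:
    """Yearly maintenance range as a percentage of the initial build cost."""
    return build_cost_usd * low_pct // 100, build_cost_usd * high_pct // 100


def total_cost_of_ownership(build_cost_usd: int, years: int) -> tuple[int, int]:
    """Build cost plus maintenance over the given number of years."""
    low, high = annual_maintenance(build_cost_usd)
    return build_cost_usd + years * low, build_cost_usd + years * high


build = 200_000  # hypothetical initial build cost in USD
print(annual_maintenance(build))                # (30000, 50000)
print(total_cost_of_ownership(build, years=3))  # (290000, 350000)
```

Over three years, maintenance adds 45–75% on top of the original build in this example, which is why it belongs in the initial budget.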

| Project Scope | Typical Cost Range | Timeline | Best For |
| --- | --- | --- | --- |
| ML Proof of Concept | $15,000 – $60,000 | 4–8 weeks | Validating ML feasibility before committing to a production build |
| Single Production ML Feature | $50,000 – $200,000 | 8–16 weeks | Adding one ML capability to an existing product (e.g., churn prediction) |
| Full ML System with MLOps | $150,000 – $600,000 | 4–9 months | End-to-end ML platform with pipelines, monitoring, and retraining |
| Enterprise ML Platform | $500,000 – $2M+ | 9–24 months | Organization-wide ML infrastructure across multiple teams and use cases |

In machine learning development, the first four weeks determine whether your system scales or fails. Most failures are not caused by weak models, but by poor architectural decisions made early.

At API DOTS, our machine learning development services focus on building systems that are production-ready from day one — not just models that work in isolation.

Successful machine learning software development starts with clarity, not code.

Before building anything, define:

If these are unclear, the project will likely stall at the PoC stage. Strong machine learning development services always begin with well-defined use cases and measurable outcomes.

In real-world machine learning development, the MVP is not the most accurate model — it is the fastest way to deliver value in production.

The goal is simple: get predictions in front of users and start collecting feedback data.

A basic model deployed with a lightweight API is more valuable than a complex model stuck in experimentation.

Best practices:

This is where most machine learning development services fail: they optimize for accuracy instead of real-world usability.
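A "basic model deployed with a lightweight API" can be very small. The sketch below uses only the Python standard library and a hand-set logistic model; the weights, feature names, and port are hypothetical, and a production service would add authentication, logging, and input validation.

```python
import json
import math
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical coefficients for a two-feature logistic model (illustrative values)
WEIGHTS = {"tenure_days": -0.01, "support_tickets": 0.4}
BIAS = -1.0


def predict(features: dict) -> float:
    """Logistic regression score in (0, 1)."""
    z = BIAS + sum(w * float(features[name]) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def handle_predict(body: bytes) -> tuple[int, dict]:
    """Pure request logic: returns (HTTP status, JSON-serializable payload)."""
    try:
        score = predict(json.loads(body))
    except (ValueError, KeyError, TypeError):
        return 400, {"error": "expected JSON with keys: " + ", ".join(WEIGHTS)}
    return 200, {"churn_probability": round(score, 4)}


class PredictHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        status, payload = handle_predict(self.rfile.read(length))
        data = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


def serve(port: int = 8080) -> None:
    """Start the server; call this explicitly, it blocks until interrupted."""
    HTTPServer(("127.0.0.1", port), PredictHandler).serve_forever()


# The request logic can be exercised without starting the server
print(handle_predict(b'{"tenure_days": 0, "support_tickets": 0}'))
```

Keeping the prediction logic in a pure function separate from the HTTP handler makes it easy to test, and easy to move to a different serving framework later.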

A practical framework to start machine learning development, whether you’re building in-house or working with a partner.

Do not start with “we want to use machine learning.” Start with a clear business problem like reducing customer churn or improving demand forecasting. Your use case must have measurable outcomes and historical data, as this will guide every decision that follows.

Before writing any code, evaluate your data. Check data availability, quality, structure, and compliance requirements. A proper data audit helps define realistic timelines, costs, and feasibility far better than assumptions.

Organizations typically choose between three approaches:

For many businesses, partnering with an experienced team helps reduce risk and accelerate time to value. For example, companies often work with providers like API DOTS to design, build, and deploy machine learning systems with a focus on production readiness, MLOps, and long-term scalability.

The right approach depends on your internal capabilities, timeline, and business priorities.

Start with a 4–8 week PoC using real data. The goal is to validate feasibility, data readiness, and business impact. Even if it uncovers data issues, it saves significant time and cost before full-scale development.

The biggest misconception in machine learning software development is that the model is the product. As the preceding sections show, it is not. The model is only one component of a much larger system that includes data pipelines, feature engineering, deployment infrastructure, monitoring, and continuous retraining. Most failures happen not because the model is wrong, but because the system around it is incomplete or unreliable.

This is why machine learning should be treated as a software engineering discipline, not a one-time data science project.

The organizations that succeed with machine learning are not the ones using the most complex algorithms. They are the ones who build systems that work reliably in production, adapt to changing data, and deliver measurable business outcomes over time.

If you are evaluating machine learning for your business, the focus should not be on which model to use but on how the entire system will be designed, deployed, and maintained.

Because in the end, machine learning does not create value in notebooks. It creates value in production.

Machine learning software development is the process of building systems that learn from data to make predictions, automate decisions, and improve over time in production environments.

Traditional software uses fixed rules defined by developers, while machine learning systems learn patterns from data. This makes ML systems probabilistic, harder to test, and dependent on data quality and monitoring.

A production machine learning system typically includes five components:

The cost varies based on system complexity, data size, and infrastructure requirements. Projects typically range from about $15,000 for a proof of concept to $600,000 or more for a full production system with MLOps, with ongoing maintenance costs of 15–25 percent of the initial build cost per year.

Most ML projects fail due to poor data quality, lack of MLOps, deployment challenges, and missing monitoring systems rather than issues with the model itself.

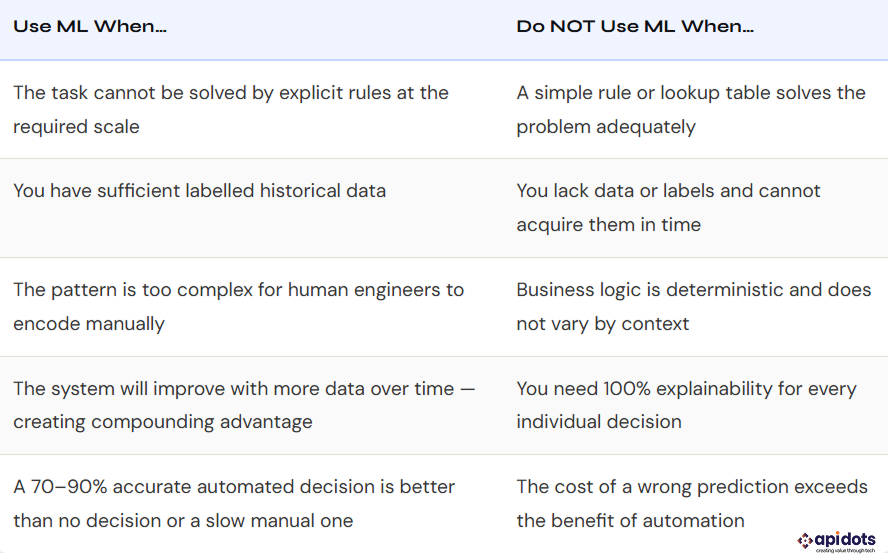

Machine learning is most useful when:

Timelines vary depending on scope:

It depends on internal capabilities. Companies with strong data and engineering teams may build in-house, while others often partner with experts to reduce risk, cost, and time to deployment.


Hi! I’m Aminah Rafaqat, a technical writer, content designer, and editor with an academic background in English Language and Literature. Thanks for taking a moment to get to know me. My work focuses on making complex information clear and accessible for B2B audiences. I’ve written extensively across several industries, including AI, SaaS, e-commerce, digital marketing, fintech, and health & fitness, with AI as the area I explore most deeply. With a foundation in linguistic precision and analytical reading, I bring a blend of technical understanding and strong language skills to every project. Over the years, I’ve collaborated with organizations across different regions, including teams here in the UAE, to create documentation that’s structured, accurate, and genuinely useful. I specialize in technical writing, content design, editing, and producing clear communication across digital and print platforms. At the core of my approach is a simple belief: when information is easy to understand, everything else becomes easier.