AI Data Privacy: Risks, Compliance, and How to Protect Sensitive Data

As AI matures, new risks and challenges keep emerging. I believe we did not “fully think AI through” in the beginning. When the technology first appeared, we knew very little about its long-term implications, and we are still uncovering new risks with each passing day.

One of the most important concerns is data privacy. People are interacting with AI systems in one-on-one conversations without realizing that they may be sharing excessive personal information. This information could potentially be misused or used to train AI models.

The question is: did we truly sign up for this?

Concerns about AI data privacy are rising rapidly, and it falls on organizations to embed ethical and responsible data practices into their systems. Those adopting AI must balance innovation against that responsibility. Without proper governance, AI systems can expose sensitive data, violate privacy regulations, and damage customer trust.

Companies building AI-powered platforms should also understand how machine learning integrates into modern software systems. For a deeper look, see our guide on AI and machine learning in SaaS applications, which explains how AI models interact with large datasets and how companies can manage data responsibly during development.

Having established the need for responsible AI, let's dig into the topic, starting with what AI data privacy actually means.

AI data privacy is the protection of personal and sensitive information used by artificial intelligence systems during data collection, processing, training, and deployment.

Unlike traditional software systems, AI models require large datasets to learn patterns and improve performance. These datasets may contain personal information such as user behavior, location data, financial transactions, or health records.

AI data privacy focuses on ensuring that this information is handled responsibly. This includes protecting data from unauthorized access, ensuring transparency about how data is used, and complying with global data protection regulations.

Organizations must also ensure that their AI systems do not expose sensitive information through model outputs or data leaks. As AI technologies evolve, maintaining strong privacy safeguards has become an essential part of building trustworthy AI systems.

Artificial intelligence introduces several privacy challenges that do not typically exist in traditional software systems.

Because of these factors, organizations must develop strong governance frameworks to ensure responsible AI usage. Businesses adopting AI technologies should also understand the challenges associated with implementing AI in real-world environments.

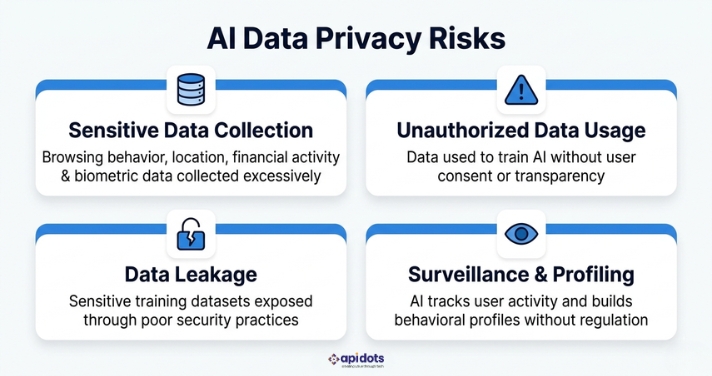

Organizations deploying AI systems must understand the privacy risks associated with data-driven technologies.

AI systems often collect large amounts of personal data, including browsing behavior, location information, financial activity, and biometric identifiers. Collecting excessive data increases privacy risks and expands the potential attack surface.

Data collected for one purpose may later be used to train AI systems without proper user consent. This raises concerns about transparency and user control over personal information.
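One way to guard against this kind of purpose drift is to check recorded consent before any row enters a training set. The snippet below is a minimal sketch under stated assumptions: the `ConsentRecord` type, the `"model_training"` purpose label, and the row layout are all hypothetical names chosen for illustration, not part of any specific framework.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRecord:
    """Hypothetical record of the purposes a user has agreed to."""
    user_id: str
    purposes: set = field(default_factory=set)


def may_use_for(record: ConsentRecord, purpose: str) -> bool:
    """Return True only if the user explicitly consented to this purpose."""
    return purpose in record.purposes


def filter_training_rows(rows, consents, purpose="model_training"):
    """Keep only rows whose owner consented to the given purpose.

    Rows from users with no consent record at all are dropped as well.
    """
    allowed = []
    for row in rows:
        record = consents.get(row["user_id"])
        if record is not None and may_use_for(record, purpose):
            allowed.append(row)
    return allowed


consents = {
    "u1": ConsentRecord("u1", {"support", "model_training"}),
    "u2": ConsentRecord("u2", {"support"}),
}
rows = [
    {"user_id": "u1", "text": "ticket A"},
    {"user_id": "u2", "text": "ticket B"},
    {"user_id": "u3", "text": "ticket C"},
]
training_rows = filter_training_rows(rows, consents)
```

Here only the row from `u1` would reach training: `u2` consented to support use only, and `u3` has no consent record at all. Treating a missing record as a refusal is a deliberate default in this sketch.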

Improper security practices may expose sensitive training datasets. In some cases, attackers may even extract confidential information from trained AI models.

AI-powered analytics systems can track user activity and build behavioral profiles. If not properly regulated, this capability may lead to surveillance practices that violate privacy expectations.

Organizations building AI-driven platforms must design secure system architectures to minimize these risks. Understanding different software architecture models can also help companies structure their platforms more securely.

To reduce privacy risks, organizations must integrate privacy considerations throughout the AI development lifecycle. Some ways to do this are as follows.

Organizations should collect only the data necessary to achieve the intended purpose of an AI system. Limiting data collection reduces exposure and minimizes risk.
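In code, data minimization often comes down to an explicit allowlist applied at the point of ingestion. The following is a minimal sketch, assuming a hypothetical churn-prediction feature set. The field names are invented for illustration.

```python
# Hypothetical allowlist: the only fields this model is assumed to need.
ALLOWED_FIELDS = frozenset({"account_age_days", "plan", "monthly_logins"})


def minimize(record: dict, allowed=ALLOWED_FIELDS) -> dict:
    """Return a copy of the record containing only allowlisted fields."""
    return {key: value for key, value in record.items() if key in allowed}


raw_event = {
    "account_age_days": 412,
    "plan": "pro",
    "monthly_logins": 18,
    "email": "person@example.com",   # not needed for the model
    "gps_location": "25.20,55.27",   # not needed for the model
}
minimal_event = minimize(raw_event)
```

An allowlist is usually a safer default than a blocklist, because a newly added field is excluded until someone decides it is needed.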

Privacy protections should be integrated into system architecture from the beginning rather than added later as an afterthought. Designing AI systems with built-in privacy safeguards improves security and compliance.

Users should understand how their data is collected, processed, and used by AI systems. Transparency builds trust and ensures organizations remain accountable.

Strong governance frameworks are necessary to monitor AI systems and ensure compliance with internal policies and regulatory requirements.

Organizations must define clear responsibilities for AI decision-making and data handling. Accountability ensures that privacy violations can be addressed quickly and effectively.

Businesses building AI-powered products should integrate these principles throughout their development process. Our blog on enterprise web development processes and benefits explains how structured development workflows can improve security, scalability, and governance.

💡 Is your organization’s AI system built with privacy compliance in mind? At APIDOTS, we help businesses design and develop AI-powered software with privacy-by-design principles baked in from day one. Talk to our team →

Governments around the world are introducing regulations that govern how organizations collect, process, and store personal data.

One of the most influential regulations is the General Data Protection Regulation (GDPR) in the European Union. GDPR establishes strict requirements for transparency, consent, and data protection.

In the United States, the California Consumer Privacy Act (CCPA) gives California residents greater control over how their personal information is collected and used by organizations. Other regions are also introducing privacy laws that regulate digital data and AI systems. As a result, organizations must design their AI platforms with compliance in mind.

The stakes around data protection remain extremely high. According to IBM’s 2025 Cost of a Data Breach Report, the global average cost of a data breach was about USD 4.4 million. Beyond financial losses, such incidents also damage something far harder to measure: public trust in a company’s brand.

It is important that companies developing AI-powered software products understand how regulatory requirements affect software architecture and development costs.

Our guide on custom software development costs explores how compliance, security, and infrastructure considerations influence development budgets.

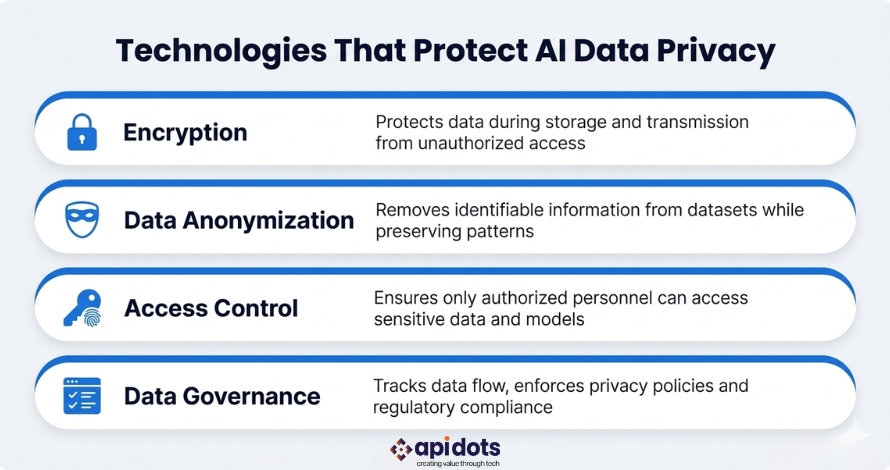

Several technologies and security practices help organizations protect sensitive data used in AI systems.

Encryption protects data during storage and transmission. By encrypting sensitive information, organizations reduce the risk of unauthorized access.

Anonymization removes identifiable information from datasets while still allowing AI models to analyze patterns.
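As an illustration, the sketch below drops direct identifiers, replaces the user ID with a keyed hash, and coarsens age into a band. Strictly speaking this is pseudonymization and generalization rather than full anonymization, since anyone holding the key could re-link the tokens. The field names and the `deidentify` helper are assumptions made for this example.

```python
import hashlib
import hmac

# Hypothetical set of fields treated as direct identifiers.
DIRECT_IDENTIFIERS = {"name", "email", "phone"}


def pseudonymize_id(user_id: str, secret_key: bytes) -> str:
    """Derive a stable token from the ID using HMAC-SHA256."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def deidentify(record: dict, secret_key: bytes) -> dict:
    """Strip direct identifiers, tokenize the user ID, and band the age."""
    out = {
        key: value
        for key, value in record.items()
        if key not in DIRECT_IDENTIFIERS and key != "user_id"
    }
    out["user_token"] = pseudonymize_id(record["user_id"], secret_key)
    if "age" in out:
        decade = (out.pop("age") // 10) * 10
        out["age_band"] = f"{decade}-{decade + 9}"
    return out


key = b"example-key-store-real-keys-in-a-secrets-manager"
record = {
    "user_id": "u-1001",
    "name": "Jane Doe",
    "email": "jane@example.com",
    "age": 37,
    "purchases": 5,
}
safe_record = deidentify(record, key)
```

The same user always maps to the same token under a given key, so a model can still learn per-user patterns without seeing names or email addresses. Combinations of the remaining fields can still identify people in small datasets, so this step reduces risk rather than removing it.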

Identity and access management systems ensure that only authorized personnel can access sensitive data and training datasets.
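The core idea can be sketched as a role-to-permission lookup that runs before any sensitive data is returned. This is a simplified illustration, not a substitute for a real identity provider. The roles, permission strings, and the `load_training_data` function are hypothetical.

```python
# Hypothetical role-to-permission mapping for illustration only.
ROLE_PERMISSIONS = {
    "ml_engineer": {"read:training_data"},
    "data_steward": {"read:training_data", "read:raw_pii", "delete:raw_pii"},
    "analyst": {"read:aggregates"},
}


class AccessDenied(Exception):
    """Raised when a role lacks the permission an operation requires."""


def require_permission(role: str, permission: str) -> None:
    """Raise AccessDenied unless the role grants the permission."""
    if permission not in ROLE_PERMISSIONS.get(role, set()):
        raise AccessDenied(f"role {role!r} lacks {permission!r}")


def load_training_data(role: str) -> list:
    """Return training rows only to roles allowed to read them."""
    require_permission(role, "read:training_data")
    return ["row-1", "row-2"]  # placeholder for a real dataset read


engineer_rows = load_training_data("ml_engineer")
try:
    load_training_data("analyst")
    analyst_blocked = False
except AccessDenied:
    analyst_blocked = True
```

Unknown roles fall through to an empty permission set, so access is denied by default rather than granted by omission.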

Data governance tools track how data flows through systems, enforce privacy policies, and help organizations maintain regulatory compliance. Organizations launching AI-powered software products often combine these technologies with structured product development strategies.

Protecting data privacy is not only a legal requirement but also a strategic advantage for businesses.

Organizations that prioritize privacy protection build stronger relationships with customers and partners. Transparent data practices increase trust and reduce concerns about how personal information is handled. Strong privacy protections also reduce the risk of costly data breaches, regulatory penalties, and reputational damage.

Businesses that implement responsible AI practices are better positioned to innovate while maintaining compliance with evolving regulations.

It is evident that artificial intelligence offers enormous potential to improve business operations, automate decision-making, and generate valuable insights. However, these benefits come with new responsibilities related to data protection and privacy.

Organizations must ensure that AI systems handle data responsibly, protect sensitive information, and comply with global privacy regulations. Credible data privacy protections that keep people safe are a basic human right.

By implementing strong governance frameworks, adopting privacy-preserving technologies, and following responsible AI principles, businesses can build AI systems that deliver innovation while maintaining trust and accountability.

🚀 Ready to build AI software that’s secure, compliant, and privacy-first? APIDOTS specializes in developing responsible AI-powered platforms tailored to your business needs. Get in touch with us today →

What is AI data privacy?

AI data privacy refers to protecting personal and sensitive data used in artificial intelligence systems during data collection, processing, training, and deployment.

Why is data privacy important in AI?

AI systems often process large volumes of personal information. Protecting this data is essential to prevent misuse, security breaches, and regulatory violations.

What are the main AI privacy risks?

Common risks include excessive data collection, unauthorized data usage, model data leakage, and behavioral profiling.

How can companies protect AI data?

Organizations can protect AI data through encryption, anonymization, strong governance frameworks, and privacy-by-design development practices.

Do AI systems need to comply with privacy regulations?

Yes. AI systems must comply with regulations such as GDPR, CCPA, and other regional data protection laws that govern how personal information is collected and processed.


Hi! I’m Aminah Rafaqat, a technical writer, content designer, and editor with an academic background in English Language and Literature. Thanks for taking a moment to get to know me. My work focuses on making complex information clear and accessible for B2B audiences. I’ve written extensively across several industries, including AI, SaaS, e-commerce, digital marketing, fintech, and health & fitness, with AI as the area I explore most deeply. With a foundation in linguistic precision and analytical reading, I bring a blend of technical understanding and strong language skills to every project. Over the years, I’ve collaborated with organizations across different regions, including teams here in the UAE, to create documentation that’s structured, accurate, and genuinely useful. I specialize in technical writing, content design, editing, and producing clear communication across digital and print platforms. At the core of my approach is a simple belief: when information is easy to understand, everything else becomes easier.